Project 05: ClothGNN Demo: Ultra-Lightweight Real-Time Cloth Dynamics

Project code: projects/05-clothgnn__project-space

This project is best understood as a three-step story:

- Without my model: a spring-mass cloth simulation produces the ground-truth rollout on a 32x32 mesh.

- With my model: a tiny recurrent GNN (Encoder→GRU→Decoder) predicts the same dynamics from a single forward pass per frame.

- Final Taichi demo: the learned rollout is rendered in a polished GGUI presentation with height-based coloring and honest comparison to the physics baseline.

The important point is not the presentation quality. The important point is that this model has 49K parameters and runs at 1451 FPS — fast enough to serve as a real-time inner loop, not just an offline approximation.

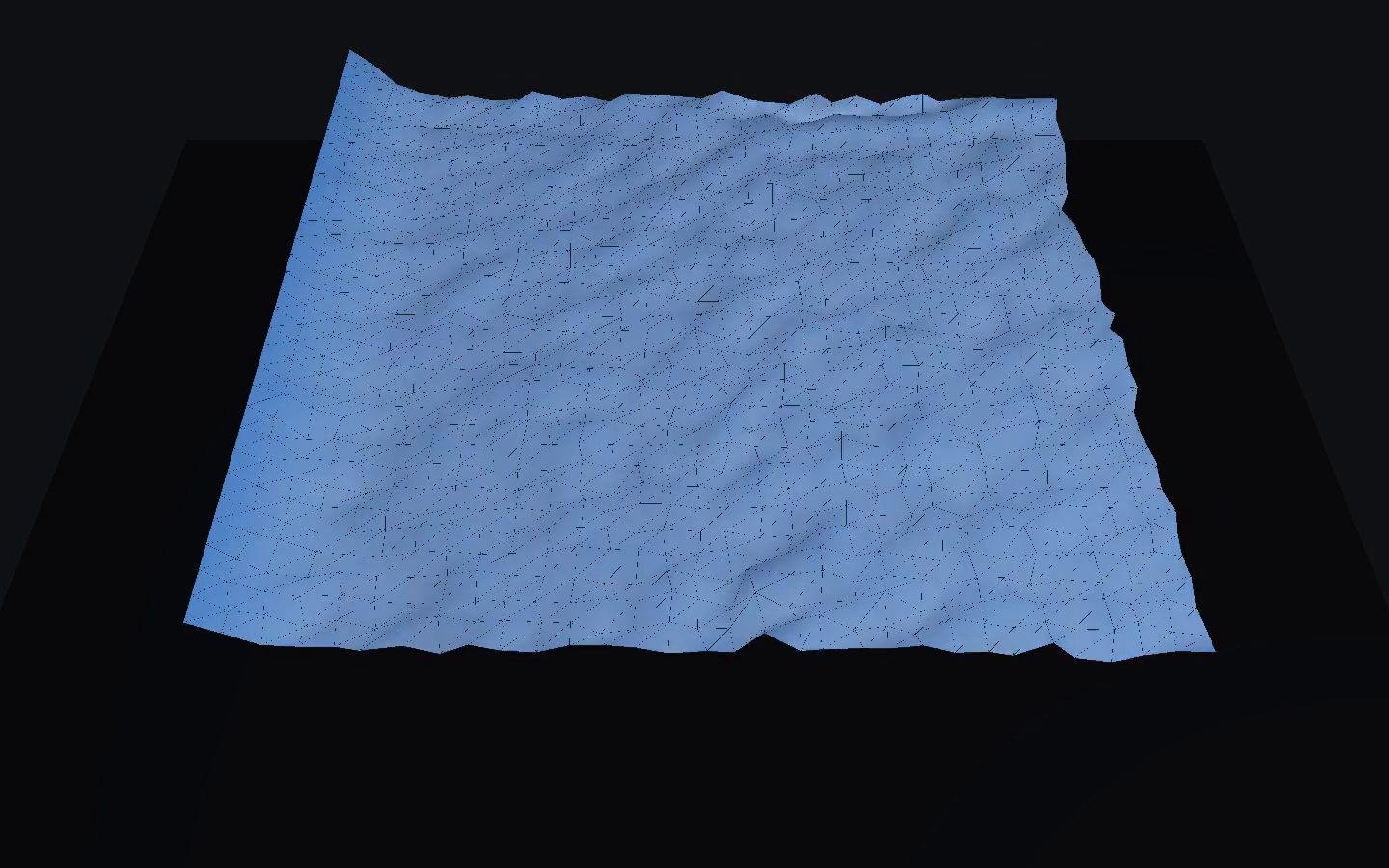

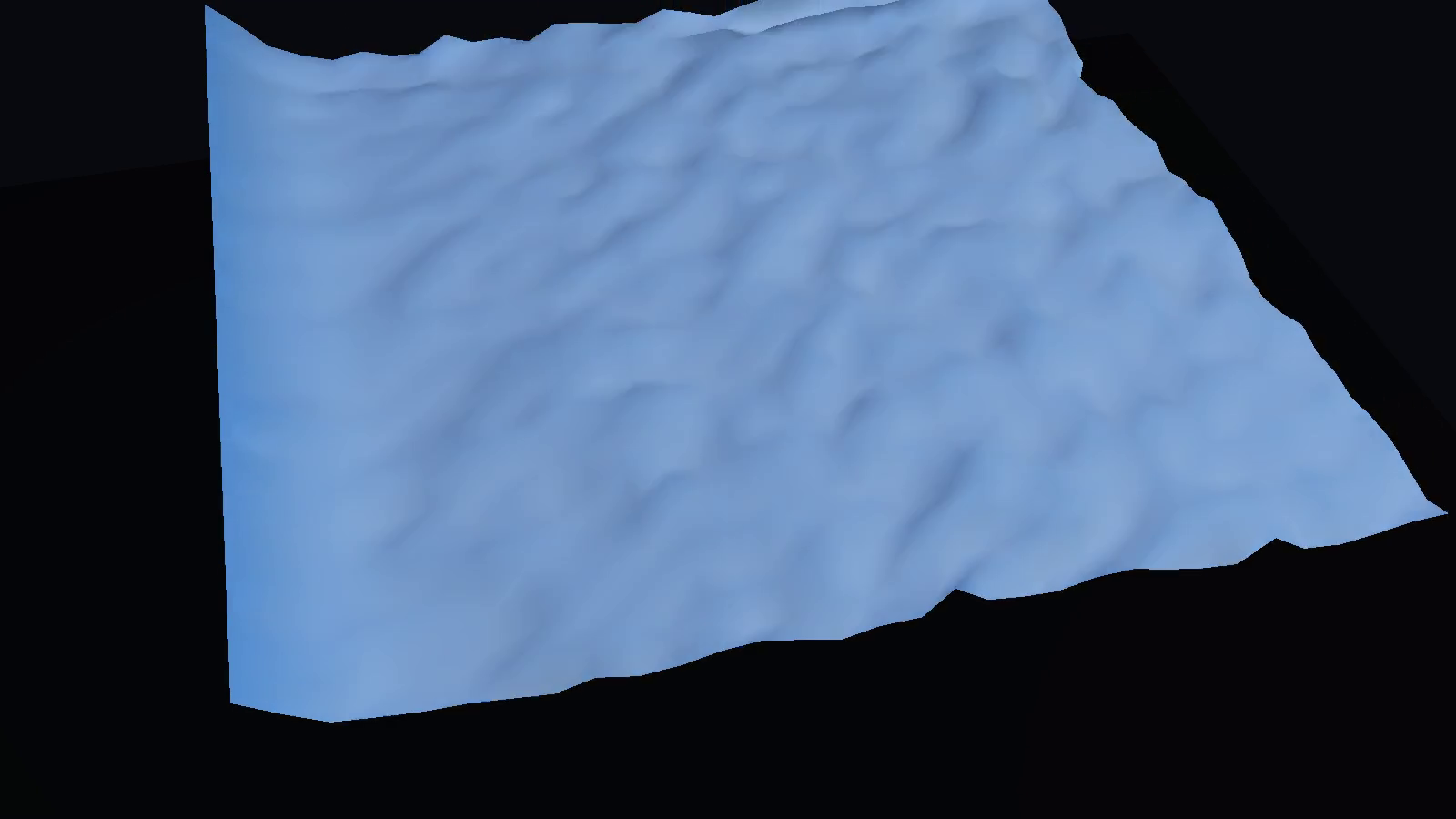

Without My Model vs With My Model

The comparison that matters is not "ugly render vs polished render." It is "physics simulation vs learned surrogate."

Both sequences use the same cloth topology:

- 1024 vertices on a 32x32 grid

- fixed top edge

- identical face connectivity for the baseline and learned playback
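To make the shared topology concrete, here is a minimal sketch of how a 32x32 grid with a pinned top row could be built. The function name, the flat initial layout, and the choice to include only structural (horizontal and vertical) edges are my assumptions for illustration; the project code may also use shear or bending edges.

```python
def build_cloth_grid(n=32):
    """Return (vertices, edges, faces, pinned) for an n x n cloth grid.

    Hypothetical helper: the layout and edge set are illustrative assumptions.
    """
    spacing = 1.0 / (n - 1)
    # Row 0 is treated as the fixed top edge; the cloth starts flat at height 0.
    vertices = [[c * spacing, 0.0, r * spacing] for r in range(n) for c in range(n)]

    edges = []
    for r in range(n):
        for c in range(n):
            v = r * n + c
            if c + 1 < n:
                edges.append((v, v + 1))   # horizontal structural edge
            if r + 1 < n:
                edges.append((v, v + n))   # vertical structural edge

    faces = []
    for r in range(n - 1):
        for c in range(n - 1):
            v = r * n + c
            # Two triangles per grid cell.
            faces.append((v, v + 1, v + n))
            faces.append((v + 1, v + n + 1, v + n))

    pinned = list(range(n))  # indices of the top row
    return vertices, edges, faces, pinned
```

For `n=32` this gives 1024 vertices, and because the baseline and the learned playback would both index into the same `faces` list, the two renders differ only in vertex positions.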

| Without My Model | With My Model |
| --- | --- |
| Spring-mass physics baseline: Hooke's law forces, 10 substeps, explicit integration. | ClothGNN autoregressive rollout: single forward pass per frame from the same initial state. |

The left side is the target. The right side is the learned surrogate. What matters is that the GNN is preserving enough sag, drape, and short-horizon motion to stay legible as cloth dynamics rather than collapsing into noise.
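For readers who want to see what the baseline side involves, the following is a rough sketch of one frame of a spring-mass step with Hooke's law forces, 10 substeps, and an explicit update. The stiffness, damping, gravity, time step, and unit vertex mass are placeholder assumptions, not the values used in the project.

```python
import math

def spring_mass_step(pos, vel, edges, rest_len, pinned, dt=1.0 / 60.0,
                     substeps=10, stiffness=500.0, damping=0.5, gravity=-9.8):
    """Advance one frame in place. All physical constants are assumed values.

    pos and vel are lists of [x, y, z] lists; y is height; mass is 1 per vertex.
    """
    h = dt / substeps
    pinned = set(pinned)
    for _ in range(substeps):
        forces = [[0.0, gravity, 0.0] for _ in pos]
        for (i, j), length0 in zip(edges, rest_len):
            d = [pos[j][k] - pos[i][k] for k in range(3)]
            length = math.sqrt(sum(c * c for c in d)) or 1e-12
            # Hooke's law: force proportional to extension, directed along the edge.
            f = stiffness * (length - length0) / length
            for k in range(3):
                forces[i][k] += f * d[k]
                forces[j][k] -= f * d[k]
        for v in range(len(pos)):
            if v in pinned:
                continue  # pinned vertices never move
            for k in range(3):
                # Explicit update: velocity first, then position from the new velocity.
                vel[v][k] = (vel[v][k] + h * forces[v][k]) * (1.0 - damping * h)
                pos[v][k] += h * vel[v][k]
    return pos, vel
```

The inner loops over edges and vertices run once per substep, which is the per-frame cost the learned model replaces with a single evaluation.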

The Speed Story

This is the unique angle of ClothGNN. The model is not just accurate enough — it is absurdly fast.

- 1451 FPS inference on an RTX 3090 Ti at 1024 vertices

- 49K parameters: about 197 KB of weights in fp32 (about 99 KB in fp16), small enough to stay resident in on-chip GPU cache

- Single forward pass per frame — no substep iteration, no constraint projection

To put this in perspective: a cloth simulation running at 30 FPS is considered real-time for games. ClothGNN runs at 48× that speed. The model is small enough and fast enough to serve as a cloth dynamics process inside a real-time loop, not alongside it.
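The arithmetic behind that comparison is short enough to write out. The variable names are mine; the only inputs are the 1451 FPS measurement and the 30 FPS real-time reference quoted above.

```python
def frame_time_ms(fps):
    """Convert a frame rate into time per frame in milliseconds."""
    return 1000.0 / fps

clothgnn_fps = 1451.0
realtime_fps = 30.0

per_frame_ms = frame_time_ms(clothgnn_fps)   # about 0.69 ms per inference
speedup = clothgnn_fps / realtime_fps        # about 48.4x the 30 FPS reference
# Back-to-back inferences that fit in one 30 FPS frame (33.3 ms),
# ignoring rendering and any other per-frame work.
calls_per_frame = int(frame_time_ms(realtime_fps) // per_frame_ms)  # 48
```

This is an upper bound under the assumption that inference is the only work in the frame, so a real budget would be smaller.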

This is what makes the project interesting. Many learned surrogates are faster than their reference solvers but still too slow for real-time use. ClothGNN is in a different regime — it is fast enough that inference cost essentially disappears as a bottleneck.

The architecture that enables this is deliberately minimal:

- Encoder: MessagePassing layers that embed per-vertex features into a latent space

- GRU: A single-layer gated recurrent unit that carries temporal state

- Decoder: An MLP that maps latent states back to position deltas

No attention. No transformer blocks. No multi-scale hierarchies. Just graph convolutions, a recurrence, and a decoder.
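To show how the three pieces fit together in one step, here is a dependency-free sketch of an Encoder→GRU→Decoder pass over a graph. It is not the project's implementation: the feature size, hidden size, mean aggregation over neighbors, the single linear layer standing in for the decoder MLP, and the class name `TinyRecurrentGNN` are all assumptions chosen to keep the example small.

```python
import math
import random

def make_linear(out_dim, in_dim, rng):
    """Small random weight matrix plus zero bias."""
    w = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    return w, [0.0] * out_dim

def apply_linear(layer, x):
    w, b = layer
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

class TinyRecurrentGNN:
    """Illustrative only: sizes and layer choices are hypothetical."""

    def __init__(self, feat_dim=6, hidden=8, seed=0):
        rng = random.Random(seed)
        self.encoder = make_linear(hidden, 2 * feat_dim, rng)   # [own, neighbor mean]
        self.update_gate = make_linear(hidden, 2 * hidden, rng)
        self.reset_gate = make_linear(hidden, 2 * hidden, rng)
        self.candidate = make_linear(hidden, 2 * hidden, rng)
        self.decoder = make_linear(3, hidden, rng)              # latent -> position delta

    def step(self, feats, neighbors, state):
        """feats and state are lists of lists; every vertex needs at least one neighbor."""
        deltas, new_state = [], []
        for v, own in enumerate(feats):
            nbrs = neighbors[v]
            # Message passing: aggregate neighbor features with a mean.
            mean = [sum(feats[n][k] for n in nbrs) / len(nbrs) for k in range(len(own))]
            x = [math.tanh(a) for a in apply_linear(self.encoder, own + mean)]
            h = state[v]
            # GRU cell: update gate, reset gate, candidate state, blend.
            z = [sigmoid(a) for a in apply_linear(self.update_gate, x + h)]
            r = [sigmoid(a) for a in apply_linear(self.reset_gate, x + h)]
            gated = [ri * hi for ri, hi in zip(r, h)]
            cand = [math.tanh(a) for a in apply_linear(self.candidate, x + gated)]
            h_new = [(1.0 - zi) * ci + zi * hi for zi, ci, hi in zip(z, cand, h)]
            new_state.append(h_new)
            deltas.append(apply_linear(self.decoder, h_new))
        return deltas, new_state
```

Calling `step` once per frame returns a position delta per vertex plus the recurrent state to pass into the next call, which is the "single forward pass per frame" pattern described above.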

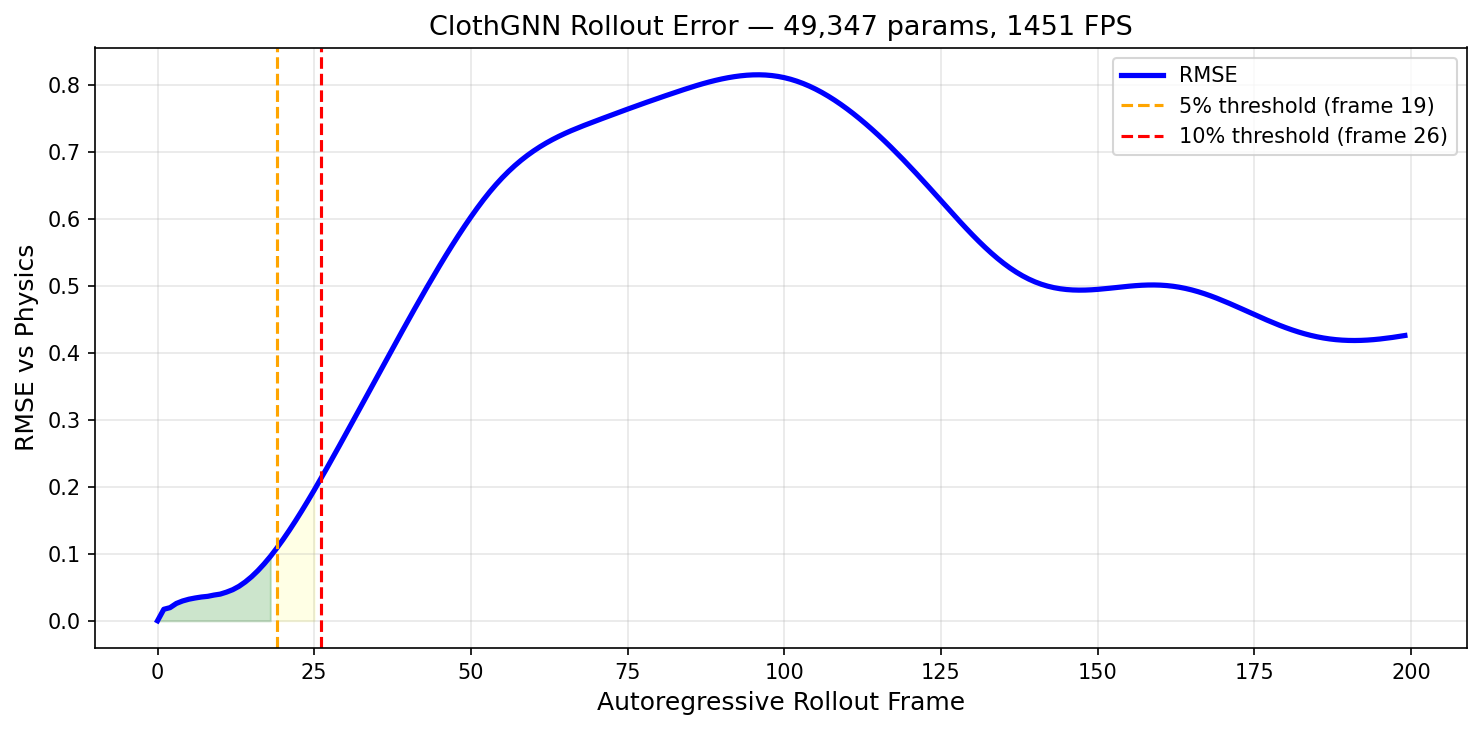

What The Numbers Say

The model is strong in the short horizon and honest about where it degrades.

- Training loss: 7.74e-5 (MSE on next-step prediction across 10 training sequences)

- Inference FPS: 1451 on RTX 3090 Ti

- Parameters: 49,347

- Trustworthy rollout (5% error): 19 frames

- Trustworthy rollout (10% error): 26 frames

- Final RMSE (frame 199): 0.426

- Mean relative edge error: 0.219

Per-frame RMSE during autoregressive rollout. The model stays below 5% error for ~19 frames.
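A "trustworthy rollout" number like the ones above can be read off a per-frame error curve with a few lines. This sketch assumes the curve is already expressed as a relative error (the post does not spell out the normalization), and the function name is hypothetical.

```python
def trustworthy_horizon(per_frame_error, threshold):
    """Count the leading frames whose error stays at or below the threshold.

    per_frame_error: relative error per rollout frame (normalization is assumed
    to have been applied already). Stops at the first frame that exceeds it.
    """
    for frame, err in enumerate(per_frame_error):
        if err > threshold:
            return frame
    return len(per_frame_error)
```

Evaluating the same curve at `threshold=0.05` and `threshold=0.10` would give the two horizons reported in the list, under that assumed definition.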

The rollout horizon is the honest limitation. At 19 frames (5% threshold), the model is a strong short-horizon surrogate. Beyond that, autoregressive error accumulation causes drift. This is typical for recurrent rollout models — the "Learning to Simulate" noise injection technique (σ=0.003) extends the window but does not eliminate the fundamental compounding problem.
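As a rough illustration of the noise injection idea, the sketch below corrupts the input positions with Gaussian noise at σ=0.003 and measures the target delta from the noisy input. This is a simplification under my own assumptions: it uses independent per-frame noise rather than noise accumulated over an input history, and whether the project adjusts its targets in exactly this way is not stated in the post.

```python
import random

def noisy_training_pair(positions, next_positions, sigma=0.003, rng=random):
    """Return (noisy_input, target_delta) for one training example.

    Hypothetical helper. positions and next_positions are lists of [x, y, z].
    """
    noisy = [[c + rng.gauss(0.0, sigma) for c in p] for p in positions]
    # The target is measured from the noisy input, so the model is trained to
    # pull a slightly wrong state back toward the true next state.
    target = [
        [n - q for n, q in zip(nxt, cur)]
        for nxt, cur in zip(next_positions, noisy)
    ]
    return noisy, target
```

The point of the corrected target is that the training inputs start to resemble the model's own imperfect rollout states, which is why the technique widens the window without removing error accumulation.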

For use cases where short bursts of cloth dynamics matter more than long-term stability — interactive previews, game LOD, rapid prototyping — this trade-off is favorable. For offline simulation replacement, the rollout window would need to be longer.

Why This Matters

The value of ClothGNN is not that it simulates cloth. The value is how little compute it needs to do so.

A simulation becomes a forward pass

A physics cloth solver iterates: forces, constraints, substeps, collision checks. Each frame requires work proportional to the complexity of the simulation. ClothGNN replaces that entire pipeline with a single neural network evaluation. The cost per frame is fixed and tiny.
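In code, the difference is that the per-frame loop body shrinks to one call. A minimal sketch of autoregressive playback follows; `predict_delta` is a hypothetical stand-in for the trained model (any recurrent state would live inside that callable), and clamping pinned vertices after each step is my assumption about how the fixed top edge would be enforced.

```python
def rollout(predict_delta, initial_positions, pinned, num_frames):
    """Autoregressive playback: each predicted frame becomes the next input.

    predict_delta: callable taking a list of [x, y, z] positions and returning
    one [dx, dy, dz] per vertex (placeholder for the learned model).
    """
    pinned = set(pinned)
    current = [list(p) for p in initial_positions]
    frames = [current]
    for _ in range(num_frames):
        deltas = predict_delta(current)  # one model evaluation per frame
        current = [
            list(p) if v in pinned else [c + d for c, d in zip(p, deltas[v])]
            for v, p in enumerate(current)
        ]
        frames.append(current)
    return frames
```

There are no substeps and no constraint projection inside the loop, so the cost per frame is whatever one call to `predict_delta` costs.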

The model is deployable, not just trainable

49K parameters is small enough to deploy on mobile GPUs, edge devices, or as a background process in a game engine. This is not a research model that requires a data center. It is a model that could realistically ship.
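The weight footprint follows directly from the parameter count. The sketch below counts weights only; activations, the GRU hidden state, graph connectivity, and runtime overhead are not included, and the precision options are assumptions since the post does not say which one was used.

```python
def weight_bytes(num_params, bytes_per_param):
    """Storage needed for the weights alone."""
    return num_params * bytes_per_param

params = 49_347
fp32_bytes = weight_bytes(params, 4)   # 197,388 bytes, about 193 KiB
fp16_bytes = weight_bytes(params, 2)   # 98,694 bytes, about 96 KiB
fp32_kib = fp32_bytes / 1024
fp16_kib = fp16_bytes / 1024
```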

Speed enables new use cases

At 1451 FPS, the model can be called multiple times per render frame. That opens up possibilities like:

- running multiple cloth instances simultaneously

- using the model as a fast preview while a slower solver runs in the background

- embedding cloth dynamics inside optimization loops

These are use cases that simply do not exist when inference takes 50 ms per frame.

Final Taichi Demo

The final presentation renders the GNN rollout in Taichi GGUI with height-based coloring — warm amber where the cloth sags, cool blue where it stays high. The colormap makes the physics immediately legible without relying on wireframe or shading tricks.
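The height-based coloring amounts to a linear blend between two colors keyed on vertex height. Here is a small sketch of that mapping; the exact RGB values for amber and blue are my guesses, and the function is independent of Taichi so it only shows the color math, not the viewer code.

```python
AMBER = (1.0, 0.75, 0.0)   # assumed RGB for low, sagging regions
BLUE = (0.2, 0.45, 0.9)    # assumed RGB for high regions

def height_colors(heights, low=AMBER, high=BLUE):
    """Map each vertex height to an RGB tuple between `low` and `high`."""
    lo, hi = min(heights), max(heights)
    span = (hi - lo) or 1.0  # flat cloth: avoid dividing by zero
    colors = []
    for y in heights:
        t = (y - lo) / span  # 0 at the lowest vertex, 1 at the highest
        colors.append(tuple((1.0 - t) * a + t * b for a, b in zip(low, high)))
    return colors
```

Because the normalization is recomputed from the current frame's minimum and maximum, the same sag depth can map to different colors across frames; fixing `lo` and `hi` for the whole sequence would be the alternative if consistent colors over time matter more.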

Taichi presets: Research, Pitch, Dramatic.

The Bigger Picture

Cloth dynamics surrogates matter because physics simulation is expensive and often over-precise for the task at hand. Many applications need "cloth-like motion" rather than "physically exact cloth." ClothGNN shows that a model small enough to fit in on-chip GPU cache can provide that.

The limitations are real — the rollout horizon is short, and the edge-length error is nontrivial. But the speed advantage is so large that it opens a qualitatively different design space. When inference is essentially free, the model stops being a simulation replacement and starts being a simulation primitive — something you can call inside loops, stack in parallel, or use as a first-pass estimate.

What Would Make This More Realistic

The current result is compelling as a speed-first cloth surrogate, but it is still not a deeply realistic cloth model in the stronger graphics or simulation sense of that phrase.

The core realism limit is that ClothGNN is inheriting a relatively simple spring-mass process and then running autoregressively on top of it. That is enough to produce legible cloth dynamics, but it is not enough to produce the kind of rich folds, structurally stable long-horizon motion, and material-specific behavior that people associate with higher-end cloth simulation.

Realism has to improve across the whole stack

For this project, realism is not one problem. It is a stack problem.

If I wanted ClothGNN to look materially more realistic, I would push on five layers at once:

- Reference physics: the baseline needs richer cloth behavior than a simple spring-mass solve.

- Spatial resolution: the cloth graph needs more vertices so drape, folds, and local curvature have room to exist.

- Training objective: the model needs to be rewarded for structural fidelity over longer rollout windows, not only next-step fit.

- Rollout stability: the autoregressive path has to tolerate its own prediction errors better.

- Presentation: the viewer should flatter the surface without hiding whether the dynamics themselves are weak.

If any one of those layers stays weak, the final result still reads as synthetic. Better shading does not fix weak motion. Better motion on a very coarse mesh still looks faceted. A denser mesh with unstable rollout still breaks under playback.

1. Make the baseline simulator physically richer

Right now the project is built on a simple spring-mass cloth process. That is a good engineering choice for speed, but it also puts a realism ceiling on the whole pipeline.

The baseline would become more realistic with:

- better bending behavior

- more realistic damping

- stronger stretch control

- clearer treatment of pinned constraints

- contact and collision handling beyond the simplest case

- more varied external forcing than a narrow wind pattern

The learned model can only inherit the realism that exists in the target process. If the baseline is simplified, the surrogate will faithfully learn that simplification.

2. Increase the mesh resolution without losing the speed story

The 32x32 mesh is a good deployment sweet spot, but it is still modest for cloth.

Moving upward to something like:

- 48x48

- 64x64

- or a small multi-resolution benchmark set

would help the surface read more like cloth and less like a coarse cloth proxy. Folds would localize better, the silhouette would look less blocky, and surface curvature would become more believable.

The key is to do this without accidentally erasing the project’s strongest differentiator. ClothGNN should still be presented as the model that is absurdly fast. So the right approach would be to show a speed/quality curve, not just blindly chase denser meshes.

3. Train for longer free-running stability

The current model is strongest over a short horizon. That is honest and useful, but it is also the main realism bottleneck.

To make the cloth feel more believable, the model should stay coherent further into rollout without softening, drifting, or structurally detaching from the baseline.

The most promising routes here are:

- stronger scheduled sampling

- more aggressive noise injection during training

- multi-step rollout losses

- curriculum on rollout length

- hidden-state regularization

- explicit consistency penalties on velocities and accelerations

This is likely the highest-leverage realism improvement after the baseline itself, because it directly widens the range of motion that can be shown honestly.
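Of the routes listed, the multi-step rollout loss is the easiest to show in a few lines. This sketch only computes the loss value for a free-running rollout; in an actual training setup the same computation would be written in a differentiable framework. `predict_delta` is again a hypothetical stand-in for the model, and averaging squared error over all frames and coordinates is one plausible weighting, not necessarily the one the project would use.

```python
def rollout_loss(predict_delta, start_positions, target_frames):
    """Mean squared error over a free-running rollout of len(target_frames) steps.

    target_frames: list of ground-truth frames, each a list of [x, y, z].
    """
    current = [list(p) for p in start_positions]
    total, count = 0.0, 0
    for target in target_frames:
        deltas = predict_delta(current)
        # Feed the model's own prediction forward instead of the ground truth,
        # so later frames are penalized for errors made in earlier ones.
        current = [[c + d for c, d in zip(p, dv)] for p, dv in zip(current, deltas)]
        for p, t in zip(current, target):
            total += sum((a - b) ** 2 for a, b in zip(p, t))
            count += len(p)
    return total / count if count else 0.0
```

A curriculum on rollout length would then amount to growing `len(target_frames)` over the course of training.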

4. Preserve cloth structure more explicitly

RMSE alone is not enough for cloth. A model can have a decent positional average and still look wrong because stretch, bend, or local shape are off.

If I wanted the cloth to look more realistic, I would train and evaluate more explicitly against:

- edge stretch

- bend or curvature proxies

- pinned-vertex consistency

- normal consistency

- local velocity smoothness

- structural violations under rollout

That matters because cloth is visually unforgiving. Small structural errors compound into motion that feels fake even when the broad shape is roughly correct.
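As one example of a structural metric, edge stretch can be measured by comparing predicted edge lengths against the reference. The definition below is one plausible reading of "mean relative edge error"; the post does not give the exact formula, so treat the function as an assumption.

```python
import math

def mean_relative_edge_error(pred_positions, ref_positions, edges):
    """Average of |predicted length - reference length| / reference length.

    edges: list of (i, j) vertex index pairs; reference edges must have
    nonzero length.
    """
    total = 0.0
    for i, j in edges:
        pred_len = math.dist(pred_positions[i], pred_positions[j])
        ref_len = math.dist(ref_positions[i], ref_positions[j])
        total += abs(pred_len - ref_len) / ref_len
    return total / len(edges)
```

Unlike RMSE, this value is unchanged if the whole cloth is translated, so it isolates stretch from positional drift.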

5. Broaden the training distribution

The current demo is strongest for one narrow class of cloth behavior. That is fine for a demo, but realism improves when a model behaves credibly across more than one carefully selected scenario.

Useful dataset expansion would include:

- different pin configurations

- different wind amplitudes and frequencies

- varied rest poses

- different stiffness and damping settings

- stronger perturbations

- short stress-test sequences

That would make the model feel less like a tuned clip machine and more like a reusable cloth surrogate.

6. Improve rendering only after the dynamics are stronger

Rendering still matters, but it should stay downstream of the dynamics.

Once the motion itself is stronger, realism could improve further with:

- better normals and slightly richer shading

- more subtle material response

- stronger floor grounding

- restrained shadowing

- mild display-surface smoothing where appropriate

But that should always remain secondary. A nicer render of structurally weak cloth is still structurally weak cloth.

If I compress the realism roadmap to one line, it is this:

The path to more believable cloth is richer baseline dynamics, denser simulated geometry, stronger free-running stability, and only then more polished presentation.

What Would Improve The Project Overall

If I step back from realism alone and ask what would make this a stronger project overall, the answer is broader than just “make the cloth prettier.”

The core center of gravity for ClothGNN is not realism by itself. It is speed. So the best project-level improvements are the ones that make the speed story more rigorous, more useful, and more clearly differentiated.

1. Turn the speed claim into a real frontier

The strongest version of this project is not one benchmark number. It is a clearly presented speed vs quality tradeoff.

That means adding experiments like:

- 32x32 vs 48x48 vs 64x64

- parameter count vs rollout window

- FPS vs error curves

- different hidden sizes or recurrent depths

- speed comparisons against more baseline solver modes

That would make it much easier to show why this model matters rather than just stating that it is fast.
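Once those experiments exist, the frontier itself is a small computation: keep every configuration that no other configuration beats on both speed and error. The sketch below assumes each run is summarized as a `(label, fps, error)` tuple, which is a format I am inventing for illustration.

```python
def pareto_frontier(runs):
    """Return the runs that are not dominated on (higher fps, lower error).

    runs: iterable of (label, fps, error) tuples (hypothetical summary format).
    A run is dominated if another run is at least as good on both axes and
    strictly better on one.
    """
    runs = list(runs)
    frontier = []
    for label, fps, err in runs:
        dominated = any(
            (f >= fps and e <= err) and (f > fps or e < err)
            for _, f, e in runs
        )
        if not dominated:
            frontier.append((label, fps, err))
    # Fastest first, which also orders the frontier from highest to lowest error.
    return sorted(frontier, key=lambda r: r[1], reverse=True)
```

Plotting only the frontier makes it clear which mesh sizes and hidden sizes are worth keeping and which are strictly worse choices.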

2. Add ablations that explain what actually works

Right now the post tells a good story, but the project would be more convincing with more proof.

The highest-value ablations would be:

- with and without noise injection

- one trajectory vs multiple trajectories

- one-step loss vs longer rollout loss

- edge loss on vs off

- different rollout thresholds for trustworthy playback

That would help show which ingredients are doing the real work and which ones are incidental.

3. Strengthen the deployment story

ClothGNN’s real differentiator is that it is small enough and fast enough to feel deployable.

So the project would improve meaningfully with:

- a mobile or laptop benchmark

- ONNX or TensorRT export validation

- a tiny engine-integration example

- memory footprint reporting

- latency measurements, not just FPS

That would make the “real-time inner loop” framing land much harder.
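For the latency item in particular, a small timing harness is enough to report median and tail latency alongside FPS. This is a generic sketch, not the project's benchmark script: `fn` is whatever callable runs one inference, and on a GPU that callable would need to block until the device has finished, otherwise the timer only captures the launch.

```python
import statistics
import time

def measure_latency_ms(fn, warmup=10, iters=200):
    """Time repeated calls to fn and summarize per-call latency in milliseconds."""
    for _ in range(warmup):
        fn()  # warm caches and any lazy initialization before timing
    samples = []
    for _ in range(iters):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    p99_index = min(len(samples) - 1, int(0.99 * len(samples)))
    total = sum(samples)
    return {
        "median_ms": statistics.median(samples),
        "p99_ms": samples[p99_index],
        "mean_fps": 1000.0 * len(samples) / total if total > 0 else float("inf"),
    }
```

Reporting `p99_ms` next to the mean matters for a real-time loop, because a single slow frame is visible to the user even when the average FPS looks excellent.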

4. Build a stronger use-case matrix

This project becomes more valuable when it is attached to concrete use cases.

For example:

- interactive previews

- LOD cloth in games

- surrogate rollout inside optimization loops

- real-time prototyping tools

- many-instance cloth playback

Those use cases turn the model from “fast academic artifact” into “small system with clear product value.”

5. Improve the evaluation artifact set

The current metrics are enough for a blog post, but the project would be stronger with a more complete validation suite.

Useful additions would include:

- per-frame edge-stretch histograms

- longer rollout galleries

- failure-case examples

- sequence-by-sequence generalization summaries

- structural error plots, not just RMSE

That would make it easier to evaluate the model the way cloth should actually be evaluated.

6. Turn the post itself into a stronger technical artifact

The writeup could also carry more of the project’s real value.

To make the page stronger as a technical artifact rather than just a polished case study, I would add:

- a compact ablation figure

- a speed/quality chart

- one explicit failure-case strip

- one short methodology note on noise injection and rollout training

- one note on how the Taichi viewer reflects the trustworthy rollout window

That would make the post more defensible to a skeptical technical reader.

If I Were Continuing This Project

If I had another serious pass on ClothGNN, I would prioritize the work in this order.

Priority 1: keep the speed, widen the trustworthy window

This is the single highest-leverage improvement. If the model kept its current speed while staying coherent for materially longer, the whole project would move up a tier.

Priority 2: make the target dynamics richer

The next improvement would be a more physically expressive baseline so the surrogate has something better to imitate.

Priority 3: benchmark the deployment story properly

If this project really is about ultra-lightweight cloth dynamics, then proving portability and latency should be part of the core result, not a footnote.

Priority 4: push resolution carefully

I would only push mesh density in a way that preserves the speed-first identity of the project rather than accidentally turning it into a slower, less differentiated cloth surrogate.

Honest Current Limitations

The main current limitations are:

- the rollout window is still short

- the baseline physics is intentionally simplified

- the cloth is still modest in resolution by higher-end simulation standards

- structural preservation is useful but not yet strong enough to make this a long-horizon replacement for cloth simulation

- the project is strongest as a speed-first surrogate, not a realism-first cloth model

None of those invalidate the result. They just define it correctly.

If I reduce the entire post to one sentence:

The value is not that the model simulates cloth. The value is that it does so in 0.7 milliseconds.