Project 09: HGNN-NIF Cloth Demo: Graph Networks Meet Neural Implicit Fields

Project code: projects/09-hgnn-nif-cloth__project-space

This project is best understood as a three-step pipeline:

- Without my model: a mass-spring cloth simulator produces the baseline rollout on a 40x40 mesh with gravity, time-varying wind, and 8 substeps per frame.

- With my model: a hybrid HGNN-NIF checkpoint predicts the same cloth dynamics from a 3-frame history window.

- Final Taichi demo: both rollouts are rendered in the same GGUI presentation layer, so any visual difference comes from the model, not the graphics.

The important point is not the architecture diagram. The important point is that this project combines two ideas — hierarchical graph networks for topology-aware dynamics and SIREN implicit fields for continuous surface representation — and tests whether the combination actually works better than either one alone.

Without My Model vs With My Model

Both sequences use the same fixed cloth topology:

- 1600 vertices on a 40x40 grid

- top row pinned (40 vertices)

- identical face connectivity, identical camera and lighting

- physics baseline: explicit Euler, stiffness 500, damping 0.995, gravity -9.8, sinusoidal wind at 0.4 Hz
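For readers who want the baseline in concrete terms, here is a minimal NumPy sketch of one frame of a mass-spring step using the constants listed above. It is not the project's simulator. Several things are assumptions made for illustration: the 30 fps frame rate (inferred from 9 frames = 0.30 seconds), the unit vertex mass, the wind amplitude and direction, damping applied as a per-substep velocity multiplier, structural springs only, and updating velocity before position.

```python
import numpy as np

N = 40                      # grid resolution from the post (40x40 = 1600 vertices)
STIFFNESS = 500.0           # spring stiffness from the post
DAMPING = 0.995             # assumed to be a per-substep velocity multiplier
GRAVITY = np.array([0.0, -9.8, 0.0])
WIND_HZ = 0.4               # wind frequency from the post
SUBSTEPS = 8                # substeps per frame from the post
FRAME_DT = 1.0 / 30.0       # assumed: 9 frames = 0.30 s implies 30 fps
WIND_AMP = 2.0              # hypothetical amplitude, not stated in the post
MASS = 1.0                  # hypothetical per-vertex mass


def make_cloth(n=N):
    """Hanging cloth of side length 1 in the XY plane with structural springs."""
    row, col = np.meshgrid(np.linspace(0, 1, n), np.linspace(0, 1, n), indexing="ij")
    pos = np.stack([col.ravel(), -row.ravel(), np.zeros(n * n)], axis=1)
    idx = np.arange(n * n).reshape(n, n)
    edges = np.concatenate([
        np.stack([idx[:, :-1].ravel(), idx[:, 1:].ravel()], axis=1),  # horizontal
        np.stack([idx[:-1, :].ravel(), idx[1:, :].ravel()], axis=1),  # vertical
    ])
    rest = np.linalg.norm(pos[edges[:, 0]] - pos[edges[:, 1]], axis=1)
    pinned = idx[0, :]      # top row: 40 pinned vertices
    return pos, edges, rest, pinned


def step_frame(pos, vel, edges, rest, pinned, t):
    """Advance one frame with SUBSTEPS Euler substeps (velocity first, then position)."""
    dt = FRAME_DT / SUBSTEPS
    for _ in range(SUBSTEPS):
        d = pos[edges[:, 1]] - pos[edges[:, 0]]
        length = np.linalg.norm(d, axis=1, keepdims=True)
        # Hooke's law: a stretched spring pulls its two endpoints together.
        f = STIFFNESS * (length - rest[:, None]) * d / np.maximum(length, 1e-9)
        force = np.zeros_like(pos)
        np.add.at(force, edges[:, 0], f)
        np.add.at(force, edges[:, 1], -f)
        # Sinusoidal wind along z; the direction is an assumption.
        wind = np.array([0.0, 0.0, WIND_AMP * np.sin(2 * np.pi * WIND_HZ * t)])
        acc = force / MASS + GRAVITY + wind
        vel = (vel + dt * acc) * DAMPING
        vel[pinned] = 0.0   # pinned vertices never move
        pos = pos + dt * vel
        t += dt
    return pos, vel, t


pos, edges, rest, pinned = make_cloth()
start = pos.copy()
vel = np.zeros_like(pos)
t = 0.0
for _ in range(3):          # simulate three frames
    pos, vel, t = step_frame(pos, vel, edges, rest, pinned, t)
```

Updating velocity before position keeps this toy version stable at 8 substeps; a textbook explicit Euler step would use the old velocity for the position update.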

The model tracks physics cleanly for about 9 frames (0.30 seconds). After that, autoregressive error compounds and the rollout diverges. The trust-window comparison below shows the model at its best — the region where it actually works.

Trust window (frames 0–9)

| Without My Model | With My Model |
|---|---|
| Mass-spring PBS (ground truth). 8 substeps per frame, gravity + time-varying wind. | HGNN-NIF autoregressive rollout within the 9-frame trust window (RMSE < 0.05). |

Side-by-side, locked timing:

Full 120-frame rollout (shows divergence)

After frame 9, the model diverges. Edges stretch, the surface becomes jagged, and the predicted cloth drifts from the physics target. This is expected behavior for a pure next-frame predictor without re-anchoring. I include it here because hiding it would be dishonest.
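The compounding effect is easy to reproduce with a toy predictor. The sketch below is a generic autoregressive rollout loop with a sliding history window, not the project's inference code. `rollout` and `toy_predictor` are hypothetical names, and the 2% velocity overshoot stands in for a small per-step model error.

```python
import numpy as np


def rollout(predict_next, seed_frames, num_frames):
    """Roll a next-frame predictor forward, feeding its own output back in."""
    history = list(seed_frames)
    window = len(history)               # 3-frame history window in the post
    out = []
    for _ in range(num_frames):
        nxt = predict_next(np.stack(history[-window:]))
        out.append(nxt)
        history.append(nxt)             # the prediction becomes the next input
    return np.stack(out)


def toy_predictor(history):
    """Linear extrapolation with a deliberate 2% velocity overshoot."""
    return history[-1] + 1.02 * (history[-1] - history[-2])


# Ground truth: every vertex moves at constant velocity.
rng = np.random.default_rng(0)
velocity = rng.normal(size=(1600, 3))
truth = np.stack([t * velocity for t in range(23)])     # frames 0..22

pred = rollout(toy_predictor, truth[:3], num_frames=20)  # predicts frames 3..22
error = np.linalg.norm(pred - truth[3:], axis=-1).mean(axis=-1)
```

Even though each step is only slightly wrong, `error` grows at every frame because no step ever sees the true state again.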

| Without My Model | With My Model |
|---|---|
| Full 120-frame physics baseline. | Full 120-frame HGNN-NIF rollout, which diverges after frame 9. |

The Hybrid Architecture Story

This project exists because of what I learned building the two that came before it.

Project 05 (ClothGNN) was a flat GNN — Encoder→GRU→Decoder — with 49K parameters running at 1451 FPS on a 32x32 mesh. It was absurdly fast and stable for 19 frames. But it had no sense of hierarchy. Long-range wind folds demanded ever-deeper message-passing stacks, and it could not query positions between vertices.

Project 08 (HGNN-ClothDyn) added hierarchy: a fine graph and a coarse graph with cross-resolution attention, 619K parameters on a 40x40 mesh. It handled multi-scale dynamics better, but the output was still a discrete mesh. There was no continuous field representation.

Project 09 (HGNN-NIF) combines both ideas. The HGNN captures topology-aware dynamics at two resolutions; a SIREN neural implicit field conditioned on those latents provides a continuous position field. The architecture:

- Fine HGNN: 2-layer GCN on the 40x40 graph (1600 vertices, 4641 edges), latent dim 64

- Coarse HGNN: same architecture on a 20x20 graph (400 vertices, 1121 edges)

- Cross-attention: fine ↔ coarse with 4 heads per layer

- Temporal GRU: fuses a 3-frame history into per-vertex latents (hidden dim 64)

- SIREN decoder: 3 layers of 128 units, ω₀ = 30, conditioned on per-vertex HGNN latents
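The edge counts in the list can be sanity-checked. One layout that reproduces both numbers is a regular grid with horizontal and vertical edges plus one diagonal per cell, which is what a triangulated quad mesh produces. That layout is an inference from the counts, not something the project states, and `grid_edges` is a hypothetical helper.

```python
import numpy as np


def grid_edges(n):
    """Undirected edges of an n x n grid: horizontal, vertical, one diagonal per cell."""
    idx = np.arange(n * n).reshape(n, n)
    horiz = np.stack([idx[:, :-1].ravel(), idx[:, 1:].ravel()], axis=1)
    vert = np.stack([idx[:-1, :].ravel(), idx[1:, :].ravel()], axis=1)
    diag = np.stack([idx[:-1, :-1].ravel(), idx[1:, 1:].ravel()], axis=1)
    return np.concatenate([horiz, vert, diag])


fine_edges = grid_edges(40)      # fine graph, 1600 vertices
coarse_edges = grid_edges(20)    # coarse graph, 400 vertices
print(len(fine_edges), len(coarse_edges))  # 4641 1121
```

The arithmetic is 2 · 40 · 39 + 39² = 4641 for the fine graph and 2 · 20 · 19 + 19² = 1121 for the coarse one.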

The SIREN is what makes this different from Project 08. Instead of decoding per-vertex positions through an MLP, the decoder is an implicit field. You can query it at any continuous UV coordinate — which means you could, in principle, upsample the display surface or decouple the render mesh from the physics mesh without retraining.

    history (3 × N × 3)                                next-frame positions + velocity
           │                                                          ▲
           ▼                                                          │
    ┌──────────────┐    cross-attn    ┌──────────────┐                │
    │  fine HGNN   │ ◀──────────────▶ │ coarse HGNN  │                │
    │ 40×40 · 64d  │                  │ 20×20 · 64d  │                │
    └──────┬───────┘                  └──────┬───────┘                │
           │     per-vertex latent (64)      │                        │
           ▼                                 ▼                        │
    ┌─────────────────────────────────────────┐                       │
    │ SIREN (128 × 3, ω₀ = 30), conditional   │ ──▶ continuous field ─┘
    └─────────────────────────────────────────┘
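To make the "query at any continuous UV coordinate" idea concrete, here is a minimal NumPy sketch of a latent-conditioned SIREN. The layer sizes and ω₀ come from the list above. Everything else is an assumption for illustration: the weights are random rather than trained, the per-vertex latents are bilinearly interpolated at the query point, and conditioning is done by concatenating the latent with the UV coordinate. The project may condition differently.

```python
import numpy as np

OMEGA_0 = 30.0   # from the post
HIDDEN = 128     # from the post
LATENT = 64      # from the post
N = 40           # fine grid resolution


def init_siren(rng, in_dim, hidden=HIDDEN, out_dim=3, layers=3):
    """Random weights with the usual SIREN uniform initialisation."""
    dims = [in_dim] + [hidden] * layers
    params = []
    for i in range(layers):
        fan_in = dims[i]
        bound = 1.0 / fan_in if i == 0 else np.sqrt(6.0 / fan_in) / OMEGA_0
        W = rng.uniform(-bound, bound, size=(fan_in, dims[i + 1]))
        params.append((W, np.zeros(dims[i + 1])))
    bound = np.sqrt(6.0 / hidden) / OMEGA_0
    head = (rng.uniform(-bound, bound, size=(hidden, out_dim)), np.zeros(out_dim))
    return params, head


def sample_latents(latents, uv):
    """Bilinearly interpolate an (N, N, C) latent grid at uv in [0, 1]^2."""
    n = latents.shape[0]
    xy = np.clip(uv, 0.0, 1.0) * (n - 1)
    i0 = np.minimum(np.floor(xy).astype(int), n - 2)
    frac = xy - i0
    r, c = i0[:, 0], i0[:, 1]
    fr, fc = frac[:, :1], frac[:, 1:]
    top = latents[r, c] * (1 - fc) + latents[r, c + 1] * fc
    bottom = latents[r + 1, c] * (1 - fc) + latents[r + 1, c + 1] * fc
    return top * (1 - fr) + bottom * fr


def siren_query(params, head, latents, uv):
    """Evaluate the field at arbitrary UV points; returns (M, 3) positions."""
    h = np.concatenate([uv, sample_latents(latents, uv)], axis=1)  # assumed conditioning
    for W, b in params:
        h = np.sin(OMEGA_0 * (h @ W + b))   # sine activations define a SIREN
    W_out, b_out = head
    return h @ W_out + b_out


rng = np.random.default_rng(0)
latents = rng.normal(size=(N, N, LATENT))      # stand-in for HGNN + GRU output
params, head = init_siren(rng, in_dim=2 + LATENT)
uv = rng.uniform(size=(5000, 2))               # more query points than vertices
xyz = siren_query(params, head, latents, uv)
```

The query set here has 5000 points while the graph has 1600 vertices, which is the sense in which the decoder is independent of the mesh resolution.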

Training uses a combined loss: MSE on positions + MSE on velocities + a spring-length regularizer. The model is trained with 4-step BPTT and pushforward noise (σ = 0.003) to reduce autoregressive drift.
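As a rough sketch of how those three terms fit together, the function below computes the combined loss for a single predicted frame in NumPy. The term weights are hypothetical because the post does not state them. The spring regularizer is assumed to penalize squared deviation from rest length, and the pushforward noise is assumed to be Gaussian noise added to the input history.

```python
import numpy as np

W_POS, W_VEL, W_SPRING = 1.0, 1.0, 0.1   # hypothetical weights, not from the post
NOISE_SIGMA = 0.003                      # pushforward noise sigma from the post


def combined_loss(pred_pos, pred_vel, true_pos, true_vel, edges, rest_len):
    """MSE on positions + MSE on velocities + spring-length regularizer."""
    pos_term = np.mean((pred_pos - true_pos) ** 2)
    vel_term = np.mean((pred_vel - true_vel) ** 2)
    lengths = np.linalg.norm(pred_pos[edges[:, 0]] - pred_pos[edges[:, 1]], axis=1)
    spring_term = np.mean((lengths - rest_len) ** 2)
    return W_POS * pos_term + W_VEL * vel_term + W_SPRING * spring_term


def add_pushforward_noise(history, rng, sigma=NOISE_SIGMA):
    """Perturb the input history so training sees slightly off-distribution states."""
    return history + rng.normal(scale=sigma, size=history.shape)


# Tiny example: three vertices on a line joined by two unit-length springs.
pos = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
vel = np.zeros_like(pos)
edges = np.array([[0, 1], [1, 2]])
rest_len = np.array([1.0, 1.0])

perfect = combined_loss(pos, vel, pos, vel, edges, rest_len)
stretched = combined_loss(2.0 * pos, vel, pos, vel, edges, rest_len)
noisy_history = add_pushforward_noise(np.stack([pos, pos, pos]), np.random.default_rng(0))
```

A perfect prediction gives zero loss, while the stretched prediction is penalized twice: once for the position error and once for the springs being twice their rest length.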

Cross-Project Comparison

Here is how the three cloth projects compare. The numbers tell a more nuanced story than "bigger model = better."

| | Project 05: ClothGNN | Project 08: HGNN | Project 09: HGNN-NIF |
|---|---|---|---|
| Parameters | 49K | 619K | 202K |
| Mesh | 32×32 (1024 verts) | 40×40 (1600 verts) | 40×40 (1600 verts) |
| Architecture | Encoder→GRU→Decoder | Hierarchical GNN + cross-attn | HGNN + SIREN NIF |
| Inference | 1451 FPS (GPU) | 162 FPS (GPU) | 153 FPS (CPU) |
| Trust window | 19 frames | ~40 frames | 9 frames |
| Continuous field | No | No | Yes (SIREN) |
| Hierarchy | No | Yes | Yes |

Project 05 wins on speed and parameter efficiency — dramatically. Project 08 wins on rollout stability. Project 09 wins on architectural novelty (the continuous field) but has the shortest trust window.

That last point deserves honesty. The HGNN-NIF trust window is only 9 frames, compared to 19 for the simpler ClothGNN. The hybrid architecture introduces more moving parts, and the SIREN conditioning path is harder to stabilize under autoregressive rollout. The model is doing more per forward pass, but that complexity has not yet translated into longer stable predictions.

The value of Project 09 is not that it beats the others on any single metric. It is that it demonstrates the composition: graph-structured dynamics feeding a continuous implicit field. That is the architectural idea being tested, and the test is honest about where it currently stands.

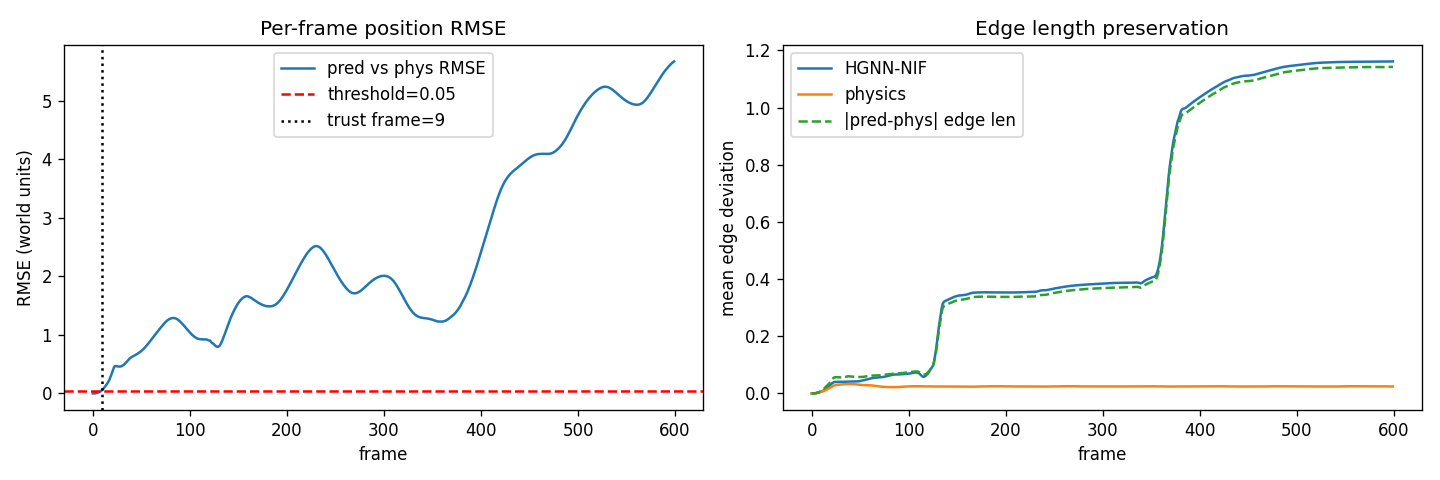

What The Numbers Say

All numbers are per-frame RMSE in world units (1 unit ≈ cloth side length). The trustworthy window is the longest prefix where RMSE stays below 0.05.
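The trust-window definition is simple enough to state in a few lines of NumPy. This is a sketch of the metric as described, not the project's evaluation script. It assumes RMSE is the root of the mean squared Euclidean vertex distance per frame, and both function names are hypothetical.

```python
import numpy as np


def per_frame_rmse(pred, target):
    """RMSE over vertices for each frame; inputs have shape (T, N, 3)."""
    sq_dist = np.sum((pred - target) ** 2, axis=-1)   # squared distance per vertex
    return np.sqrt(np.mean(sq_dist, axis=-1))


def trust_window(rmse, threshold=0.05):
    """Length of the longest prefix whose RMSE stays below the threshold."""
    over = np.flatnonzero(np.asarray(rmse) >= threshold)
    return int(over[0]) if over.size else len(rmse)


example = [0.00, 0.01, 0.02, 0.06, 0.01]
print(trust_window(example))  # 3
```

Note that the window ends at the first frame that crosses the threshold, even if a later frame dips back under it, as frame 4 does in the example.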

- Total parameters: 201,958

- Trustworthy rollout: 9 frames (0.30 seconds)

- Mean RMSE (600 frames): 2.44

- Max RMSE (end of 20 s rollout): 5.68

- Edge-length error (mean): 0.577

- Inference throughput: 153 FPS (CPU, single-thread)

- Training time: 13.6 minutes on RTX 3090 Ti (400 epochs, AdamW + cosine schedule)

The plot makes the situation clear. The model tracks physics for the first 9 frames, then autoregressive error compounds rapidly. By frame 100, the predicted cloth has diverged enough that edge lengths are off by ~59 cm on average (vs 2.4 cm for the physics baseline). The full rollout is included in the demo for transparency, but the trustworthy result is the short-horizon prediction.

The 153 FPS CPU throughput is real but should be contextualized. Project 05 runs at 1451 FPS on the GPU because its architecture is minimal. Project 09 is doing substantially more per forward pass — cross-attention, GRU fusion, SIREN evaluation — so the lower throughput is expected. The model is still fast enough for offline rollout and interactive preview, but it is not in the "disappears as a bottleneck" regime that ClothGNN occupies.

Taichi Presets

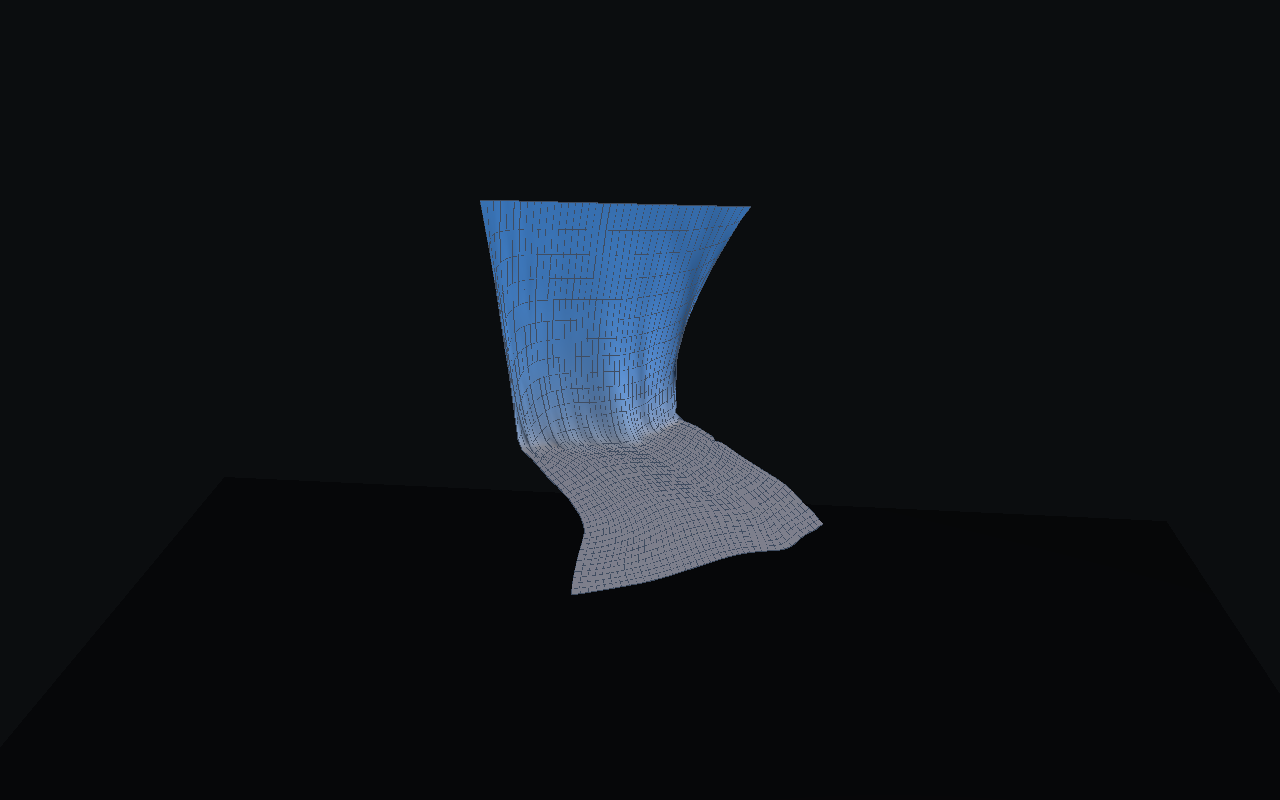

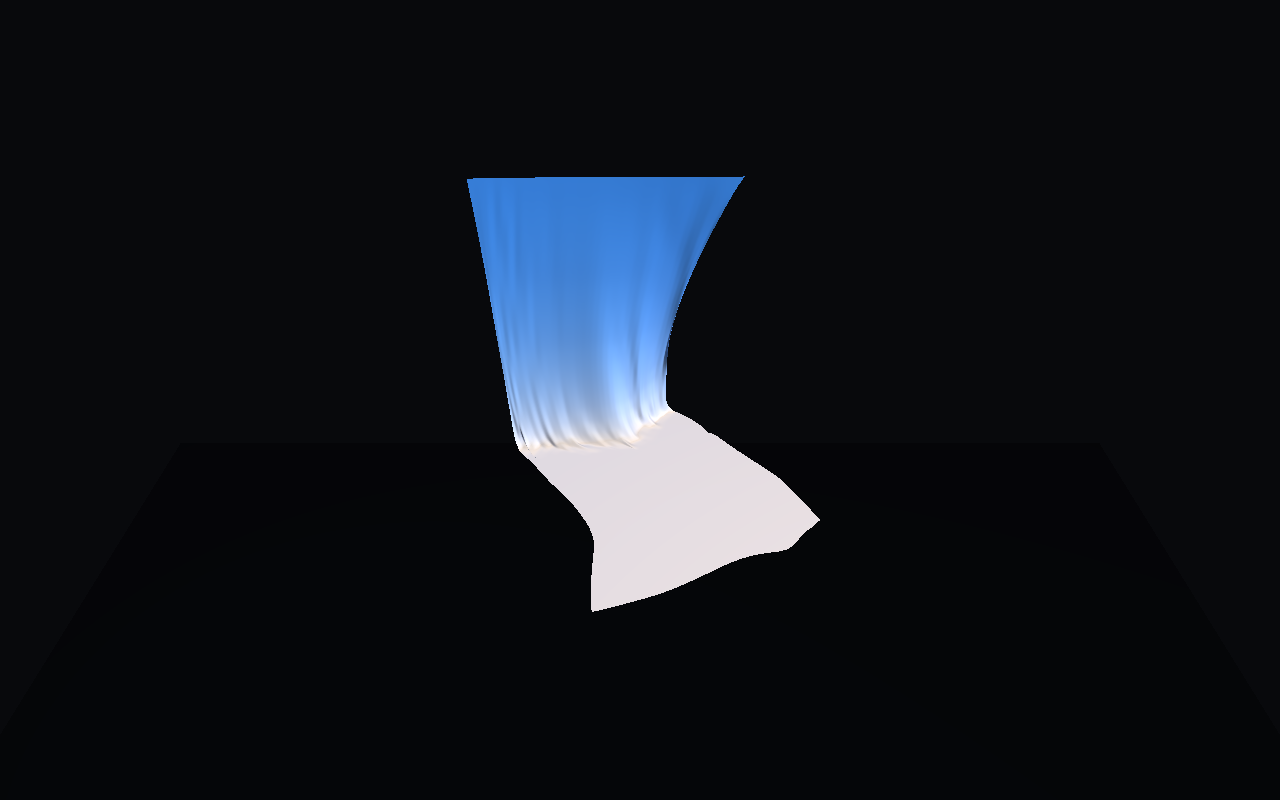

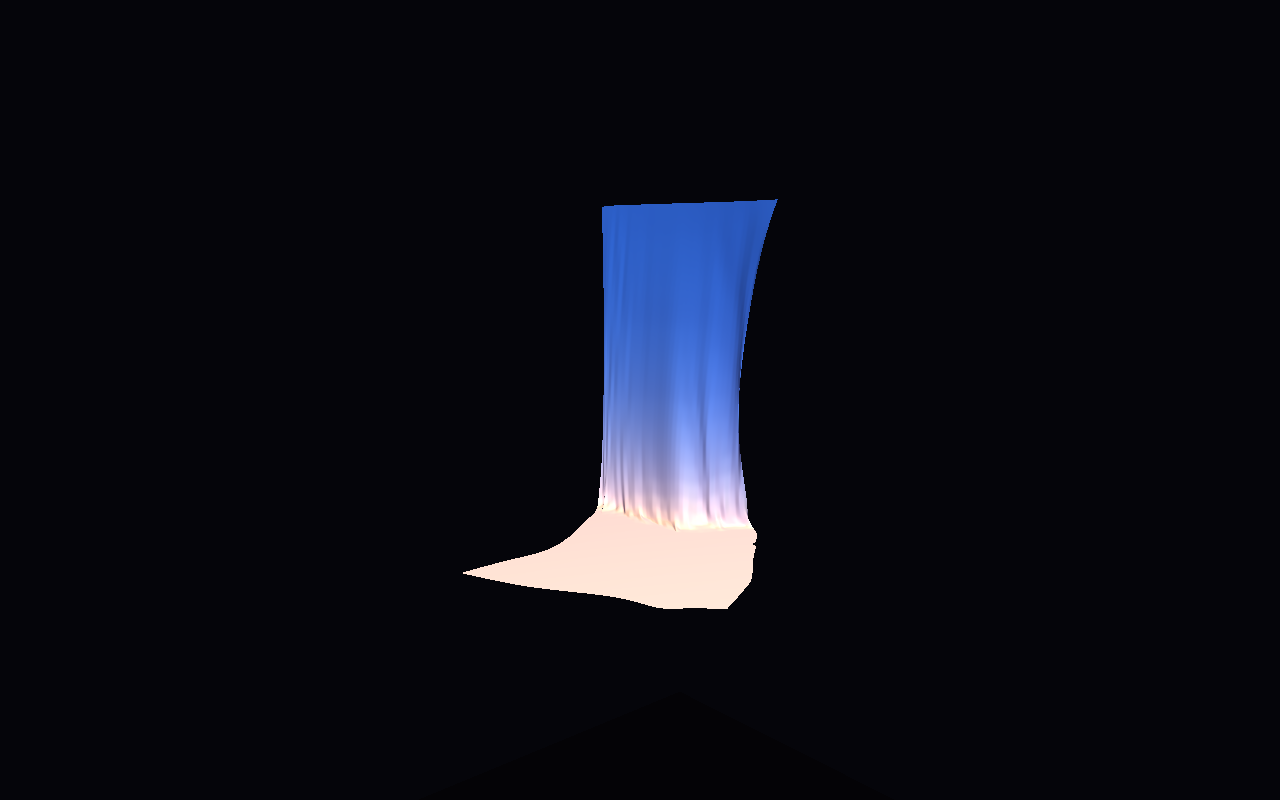

The viewer ships with three dataclass-driven presets. Same scene, different lighting and camera, different intent. The physics stills are taken at frame 60 (settled cloth); the HGNN-NIF stills are taken at frame 8 (within the trust window).

Physics baseline (frame 60): Research, Pitch, Dramatic

HGNN-NIF prediction (frame 8, within trust window): Research, Pitch, Dramatic

Research renders the actual 40x40 simulation mesh. Pitch and Dramatic use linear upsampling with Gaussian post-blur to create a smoother display surface — a presentation refinement, not a claim that the model simulates a denser mesh.
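For reference, the kind of display-side refinement described here can be sketched in plain NumPy. This is not the viewer's code. It assumes the vertex positions are stored as an (N, N, 3) grid, uses bilinear interpolation for the upsampling, and applies a separable Gaussian filter with edge padding. The upsampling factor and sigma are hypothetical.

```python
import numpy as np


def upsample_linear(grid, factor):
    """Bilinearly upsample an (N, N, 3) position grid to ((N - 1) * factor + 1)^2."""
    n = grid.shape[0]
    m = (n - 1) * factor + 1
    src = np.linspace(0, n - 1, m)                 # sample positions in grid units
    i0 = np.minimum(src.astype(int), n - 2)
    f = (src - i0)[:, None, None]                  # weights, shape (m, 1, 1)
    rows = grid[i0] * (1 - f) + grid[i0 + 1] * f   # interpolate rows: (m, n, 3)
    g = f.transpose(1, 0, 2)                       # same weights, shape (1, m, 1)
    return rows[:, i0] * (1 - g) + rows[:, i0 + 1] * g


def gaussian_blur(grid, sigma=1.0):
    """Separable Gaussian blur over the two grid axes, with edge padding."""
    radius = int(3 * sigma)
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (x / sigma) ** 2)
    kernel /= kernel.sum()
    padded = np.pad(grid, ((radius, radius), (radius, radius), (0, 0)), mode="edge")
    smooth = lambda a: np.convolve(a, kernel, mode="valid")
    out = np.apply_along_axis(smooth, 0, padded)
    return np.apply_along_axis(smooth, 1, out)


rng = np.random.default_rng(0)
sim_grid = rng.normal(size=(40, 40, 3))            # stand-in for simulated positions
display = gaussian_blur(upsample_linear(sim_grid, factor=2), sigma=1.0)
```

The upsampled grid passes exactly through the original vertices; only the blur moves them, which is why this belongs to presentation rather than simulation.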

Why It Matters

The value of this project is not any single metric. It is the architectural composition and what it proves about the design space.

Graph structure where the structure matters

Cloth is a graph. Springs connect neighbors; forces propagate only through edges. A graph network is the natural encoder. Going hierarchical — fine ↔ coarse with cross-attention — lets a single forward pass see both stitch-scale wrinkles and large-scale wind folds without stacking 20 GCN layers.

Continuous field where continuity matters

Once the graph state is encoded as per-vertex latents, those latents condition a SIREN that can be queried at any continuous coordinate. That is the "NIF" in HGNN-NIF: the decoder is an implicit field, not a per-vertex MLP. You can query positions at arbitrary UV coordinates, upsample the display surface, or eventually decouple the render mesh from the physics mesh.

Honest about the current limits

The trust window is 9 frames. That is shorter than either predecessor. The hybrid architecture introduces more complexity without yet delivering proportionally longer stable rollouts. Extending that window is a scaling-and-data question — longer training sequences, richer loss terms, maybe differentiable physics in the loop — not an architecture question. The composition works; the training recipe needs more investment.

The portfolio progression tells the real story

Project 05 proved a tiny GNN could replace a cloth solver at absurd speed. Project 08 proved hierarchy helps for multi-scale dynamics. Project 09 proves you can compose graph dynamics with a continuous implicit field and get a working (if short-horizon) surrogate.

Each project asks a different question:

- 05: Can a learned surrogate be fast enough to serve as a real-time inner loop?

- 08: Does hierarchical message passing improve multi-scale cloth prediction?

- 09: Can you combine graph-structured dynamics with a neural implicit field?

The answers are yes, yes, and yes-with-caveats. The caveats are the interesting part — they point directly at what needs to happen next.

If I reduce the whole post to one sentence:

The value is not that HGNN-NIF produces the best cloth numbers. The value is that it demonstrates graph dynamics feeding an implicit field — and is honest about exactly where that composition currently works and where it doesn't.