Project 13: NIF-Cloth4D Demo: Continuous Cloth Geometry from a Neural Implicit Field

Project code: projects/13-nif-cloth4d__project-space

This project is best understood as a three-step story:

- Without my NIF: a physics-baseline cloth sequence exists as discrete meshes sampled at fixed timesteps.

- With my NIF: the geometry is learned as a continuous signed distance function f(x, y, z, t).

- Final Taichi demo: that learned field is resampled onto a fixed-topology heightfield mesh and presented as a polished real-time render.

The important point is not that the final video looks nicer. The important point is that the representation of the cloth changes. A traditional solver gives you a stack of meshes at discrete frames. The NIF gives you a callable geometry function.

Without My NIF vs With My NIF

The comparison that matters is not "slow solver vs fast network." It is "discrete mesh sequence vs learned continuous SDF."

| Without My NIF | With My NIF |
| --- | --- |
| Physics-baseline cloth sequence, sampled directly as discrete frames. | Trained neural implicit field queried at the same timestamps. |

The left side is the target. The right side is the learned surrogate. What matters is that the learned field is not producing plausible-looking fabric noise. It is preserving recognizable drape, fold timing, and surface structure strongly enough to stay faithful to the reference dynamics.

Why This Matters

Here is the strongest way to frame the project:

This work replaces a discrete cloth mesh sequence with a continuous neural SDF that can be queried at arbitrary space-time coordinates, then rendered in real time as a stable surface demo.

That matters for a few reasons.

1. A mesh sequence becomes a function

Classical cloth solvers give you a stack of triangle meshes at fixed timesteps. You run the solver, store the frames, and work with those samples. The NIF instead learns f(x, y, z, t) → SDF directly. That means the result is no longer just a sequence of meshes. It is a callable geometry function.

This is the core representation shift in the project:

- without the NIF, the cloth is a stored mesh sequence

- with the NIF, the cloth becomes a reusable function

That is not a cosmetic improvement. It changes what can be done with the result.
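To make the contrast concrete, here is a minimal sketch of what "callable" means in practice. The `toy_field` function is a hypothetical analytic stand-in, not the trained network, and the frame times and query points are made up for illustration.

```python
import numpy as np

def toy_field(x, y, z, t):
    """Hypothetical stand-in for the learned f(x, y, z, t).

    Returns z minus a time-varying height, so the zero level set is a
    rippling sheet. It is only SDF-like (its gradient is not unit length
    everywhere); the real project would call the trained network here.
    """
    height = 0.1 * np.sin(2.0 * np.pi * (x + t)) * np.cos(2.0 * np.pi * y)
    return z - height

# A stored mesh sequence only answers for the timesteps it contains.
frame_times = [0.0, 0.25, 0.5, 0.75, 1.0]   # assumed sampling, for illustration
t_query = 0.37
has_stored_frame = any(np.isclose(t_query, ft) for ft in frame_times)

# The field answers for any (x, y, z, t), including off-frame times and
# points that never appeared as mesh vertices.
pts = np.array([[0.2, 0.4, 0.00],
                [0.2, 0.4, 0.05],
                [0.9, 0.1, -0.03]])
values = toy_field(pts[:, 0], pts[:, 1], pts[:, 2], t_query)

print("stored frame at t=0.37?", has_stored_frame)
print("field values at t=0.37:", values)
```

The stored sequence has nothing to return at t = 0.37 without an interpolation scheme bolted on. The field takes that timestamp like any other input.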

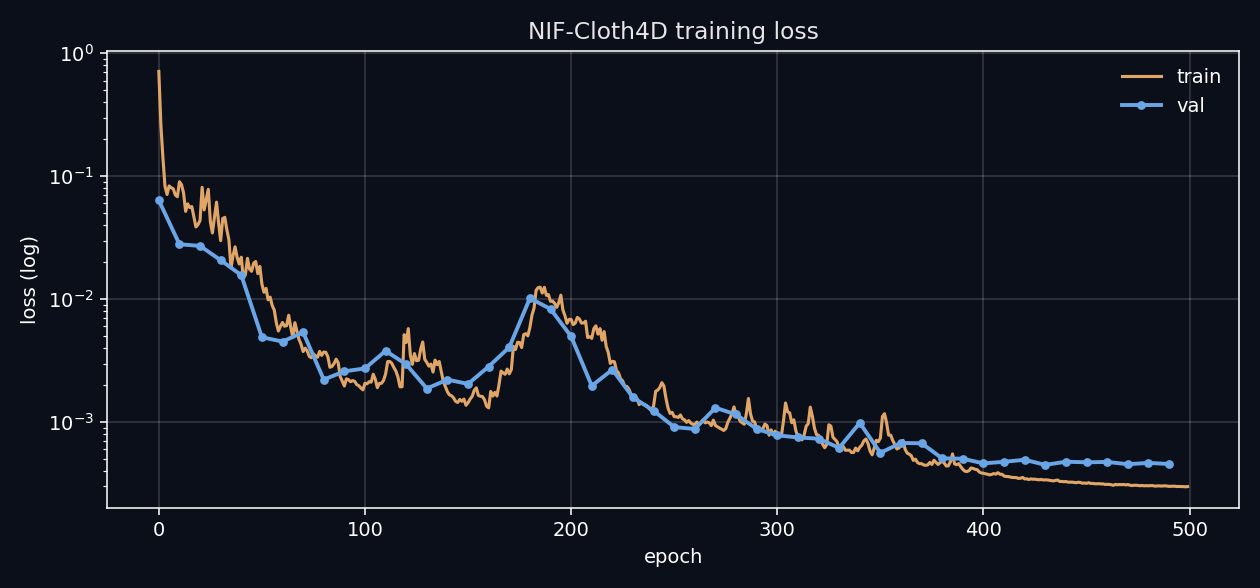

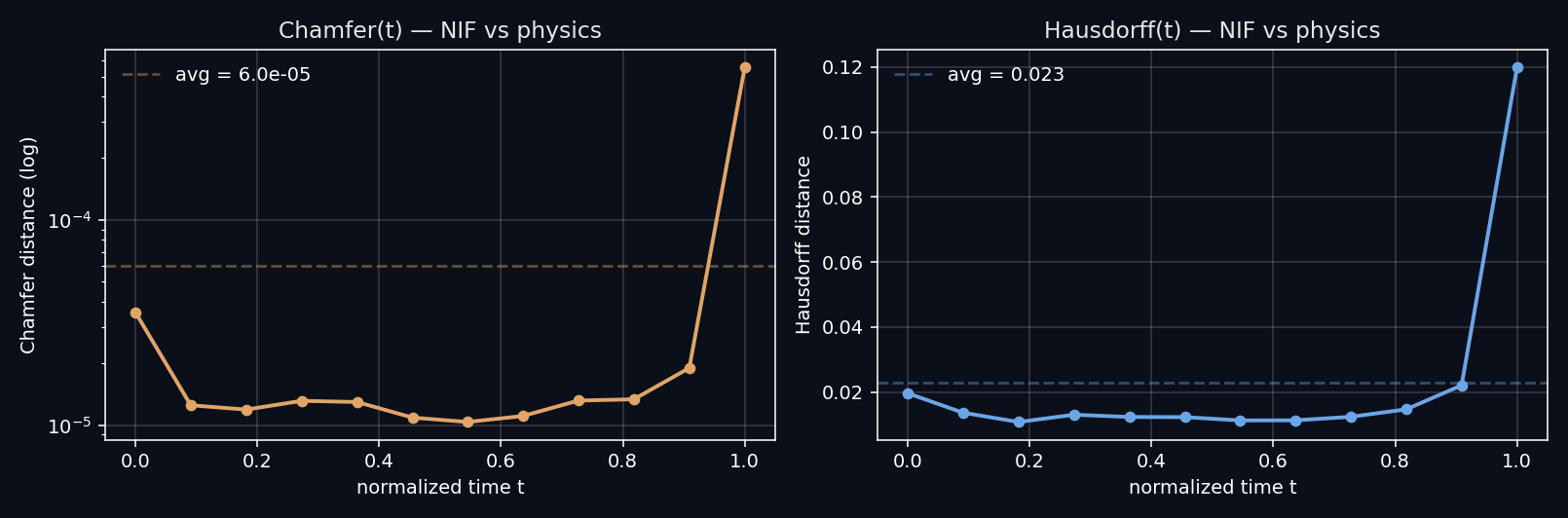

2. The numbers show it is fitting geometry, not noise

The learned model is not being judged by appearance alone.

- Average Chamfer distance: 6.00e-5

- Average Hausdorff distance: 0.0228

- Final validation loss: 4.57e-4

- Model size: 330,497 params / 3.80 MB

- Architecture: SIREN 5×256, ω₀=30, 4D spacetime input
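One reading of "SIREN 5×256" that reproduces the stated 330,497 parameters is a 4→256 input layer, five 256→256 sine layers, and a 256→1 linear output. That layout is inferred from the count, not stated in the post. The NumPy sketch below uses randomly initialised weights (not the trained checkpoint) to check the arithmetic and show the shape of a forward pass; `init_siren` and `siren_forward` are hypothetical names.

```python
import numpy as np

def init_siren(in_dim=4, width=256, hidden_layers=5, out_dim=1,
               omega0=30.0, seed=0):
    """Random SIREN-style weights. The layer layout is an assumption."""
    rng = np.random.default_rng(seed)
    dims = [in_dim] + [width] * (hidden_layers + 1) + [out_dim]
    params = []
    for i, (n_in, n_out) in enumerate(zip(dims[:-1], dims[1:])):
        # SIREN initialisation: U(-1/n_in, 1/n_in) for the first layer,
        # U(-sqrt(6/n_in)/omega0, +sqrt(6/n_in)/omega0) afterwards.
        bound = 1.0 / n_in if i == 0 else np.sqrt(6.0 / n_in) / omega0
        W = rng.uniform(-bound, bound, size=(n_in, n_out))
        b = np.zeros(n_out)          # zero biases: a simplification
        params.append((W, b))
    return params

def siren_forward(params, xyzt, omega0=30.0):
    """Map (N, 4) space-time points to (N, 1) field values."""
    h = xyzt
    for W, b in params[:-1]:
        h = np.sin(omega0 * (h @ W + b))   # sine activation on every hidden layer
    W, b = params[-1]
    return h @ W + b                        # linear output layer

params = init_siren()
n_params = sum(W.size + b.size for W, b in params)
out = siren_forward(params, np.zeros((10, 4)))

print(n_params)     # 1,280 + 5 * 65,792 + 257
print(out.shape)
```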

Those values show that the network is approximating the reference surface with enough fidelity to preserve fold shape and temporal drape structure. The point of the metrics is not decoration. The point is to demonstrate that the learned field remains geometrically coherent enough to trust as a surrogate.
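For readers who want to reproduce this kind of number, the sketch below computes both metrics on two small point sets. The post does not say which Chamfer convention it uses, so the squared, symmetric, mean-based form here is an assumption, and `chamfer_and_hausdorff` is a hypothetical helper name.

```python
import numpy as np

def pairwise_dists(a, b):
    """Euclidean distances between every point in a (N, 3) and b (M, 3)."""
    diff = a[:, None, :] - b[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def chamfer_and_hausdorff(a, b):
    d = pairwise_dists(a, b)
    a_to_b = d.min(axis=1)     # nearest neighbour in b for each point of a
    b_to_a = d.min(axis=0)     # nearest neighbour in a for each point of b
    # Assumed convention: mean of squared nearest-neighbour distances, both ways.
    chamfer = (a_to_b ** 2).mean() + (b_to_a ** 2).mean()
    # Symmetric Hausdorff: the single worst nearest-neighbour distance.
    hausdorff = max(a_to_b.max(), b_to_a.max())
    return chamfer, hausdorff

reference = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
predicted = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.1]])
chamfer, hausdorff = chamfer_and_hausdorff(reference, predicted)
print(chamfer, hausdorff)
```

Under this convention Chamfer averages squared distances while Hausdorff reports the single worst unsquared distance. The two numbers sit on different scales and answer different questions, typical error versus worst-case error.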

3. One checkpoint drives the whole pipeline

One trained .pt file now drives:

- mesh extraction at arbitrary resolution

- offline evaluation plots

- motion assets for the site and post

- the final Taichi surface demo

- future interactive sampling tools

That is the underrated architectural win here. The learning step and the presentation step are decoupled. Once the field is learned, the same representation can be resampled, re-meshed, and repackaged without rerunning the original solver.

4. Simulation and rendering are no longer tied together

In a traditional cloth workflow, solver resolution and render resolution are tightly coupled. With the NIF, rendering becomes a sampling problem. The learned SDF can be queried at whatever grid resolution is useful for validation, visualization, or interaction.

That is what enables the Taichi demo to exist as a clean endpoint instead of a brittle export trick.

5. The Taichi demo closes the loop

The final surface render is not just presentation polish. It is evidence that the learned SDF is stable enough to be remapped onto a fixed-topology mesh and played back in a real-time viewer. If the learned field were noisy or temporally incoherent, the surface would expose that immediately through pops, jitter, or staircase artifacts.

So the Taichi demo is doing more than looking good. It is validating that the learned representation survives contact with a more demanding presentation layer.

Final Taichi Demo

The final presentation path samples the learned SDF on a regular x-y grid, extracts the vertical z at which the field crosses zero, and renders it as one fixed-topology 128×128 heightfield mesh (16,384 vertices, 32,258 faces) in Taichi GGUI.
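The extraction step can be sketched in NumPy as follows. This is an illustration under stated assumptions, not the project's code: `toy_field` is a hypothetical stand-in for the trained network, the z range and sample count are made up, and each vertical column is assumed to cross zero exactly once. The Taichi rendering itself is omitted.

```python
import numpy as np

def toy_field(x, y, z, t):
    # Hypothetical stand-in for the trained network (a rippling sheet).
    height = 0.1 * np.sin(2.0 * np.pi * (x + t)) * np.cos(2.0 * np.pi * y)
    return z - height

def extract_heightfield(field, t, n=128, z_min=-0.5, z_max=0.5, n_z=65):
    """Return x, y, and heights (each (n, n)) where field crosses zero in z.

    Assumes one crossing per column inside [z_min, z_max]; overhanging
    folds would violate that assumption.
    """
    xs = np.linspace(0.0, 1.0, n)
    ys = np.linspace(0.0, 1.0, n)
    zs = np.linspace(z_min, z_max, n_z)
    X, Y, Z = np.meshgrid(xs, ys, zs, indexing="ij")
    F = field(X, Y, Z, t)
    # Index of the first z-interval whose endpoints have opposite signs.
    crossing = np.signbit(F[..., :-1]) != np.signbit(F[..., 1:])
    k = crossing.argmax(axis=-1)
    f0 = np.take_along_axis(F, k[..., None], axis=-1)[..., 0]
    f1 = np.take_along_axis(F, (k + 1)[..., None], axis=-1)[..., 0]
    # Linearly interpolate the zero crossing inside that interval.
    frac = f0 / (f0 - f1)
    heights = zs[k] + frac * (zs[k + 1] - zs[k])
    return X[..., 0], Y[..., 0], heights

def grid_faces(n):
    """Two triangles per grid cell; the topology never changes per frame."""
    idx = np.arange(n * n).reshape(n, n)
    a, b = idx[:-1, :-1].ravel(), idx[1:, :-1].ravel()
    c, d = idx[:-1, 1:].ravel(), idx[1:, 1:].ravel()
    return np.concatenate([np.stack([a, b, c], axis=1),
                           np.stack([b, d, c], axis=1)])

X, Y, H = extract_heightfield(toy_field, t=0.37)
verts = np.stack([X.ravel(), Y.ravel(), H.ravel()], axis=1)
faces = grid_faces(128)
print(verts.shape, faces.shape)   # (16384, 3) (32258, 3)
```

The counts match the figures above: 128 × 128 = 16,384 vertices and 2 × 127 × 127 = 32,258 triangles. Only the z column of `verts` changes from frame to frame, which is what keeps the topology fixed during playback.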

It matters because it demonstrates the full pipeline:

physics solver -> learned SDF -> continuous resampling -> real-time surface render

And it does that in a way that reads clearly to both technical and non-technical viewers.

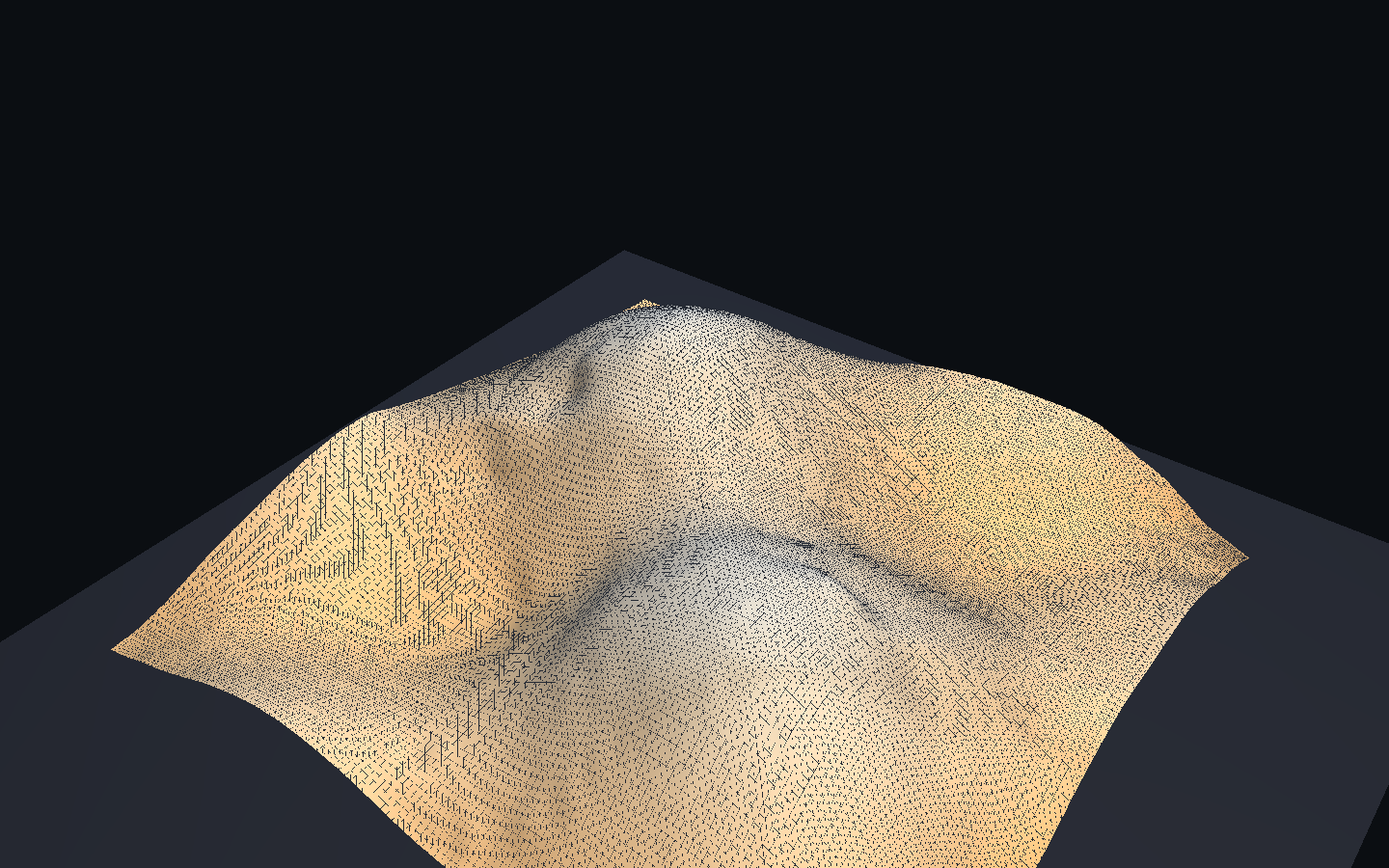

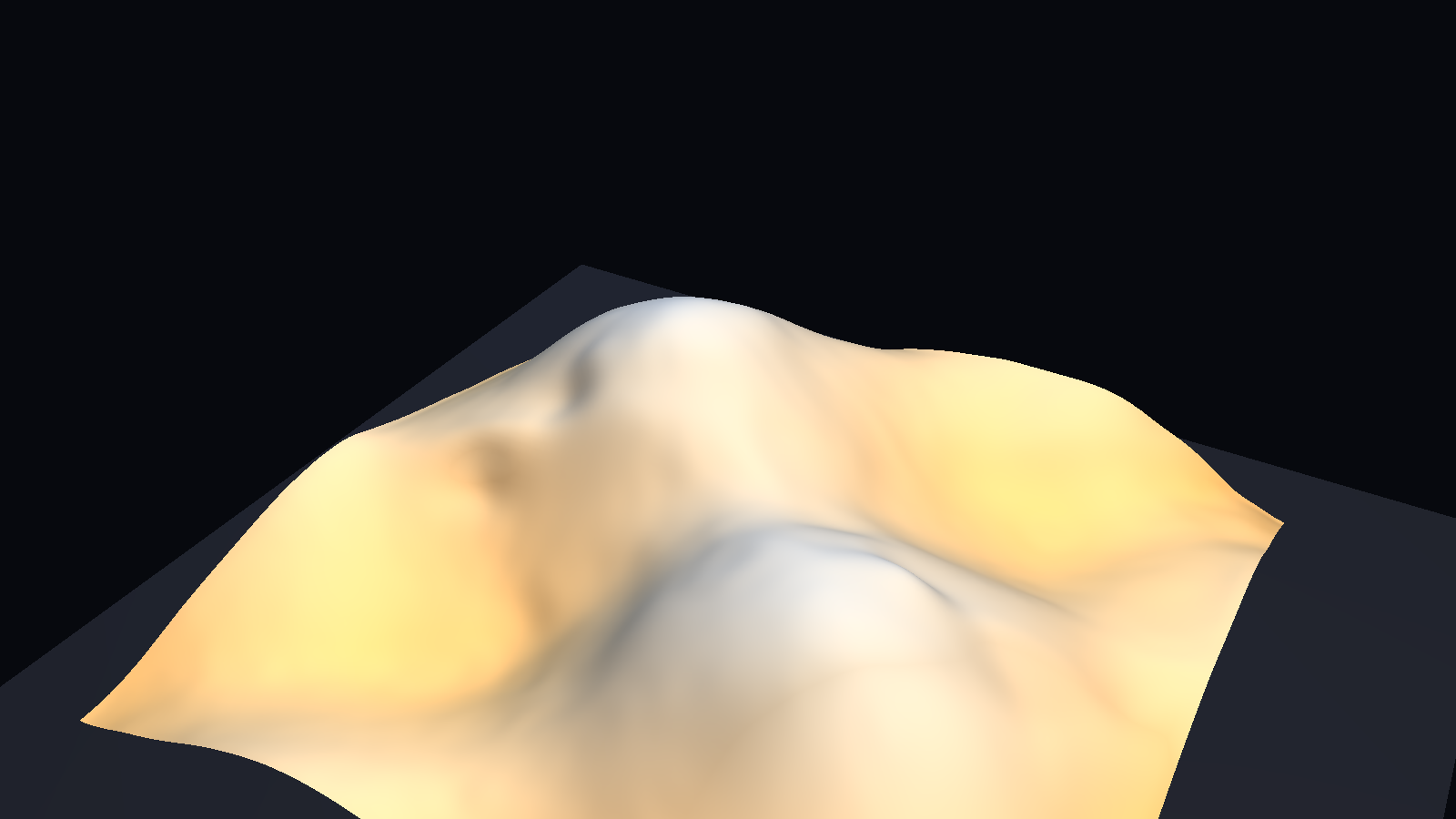

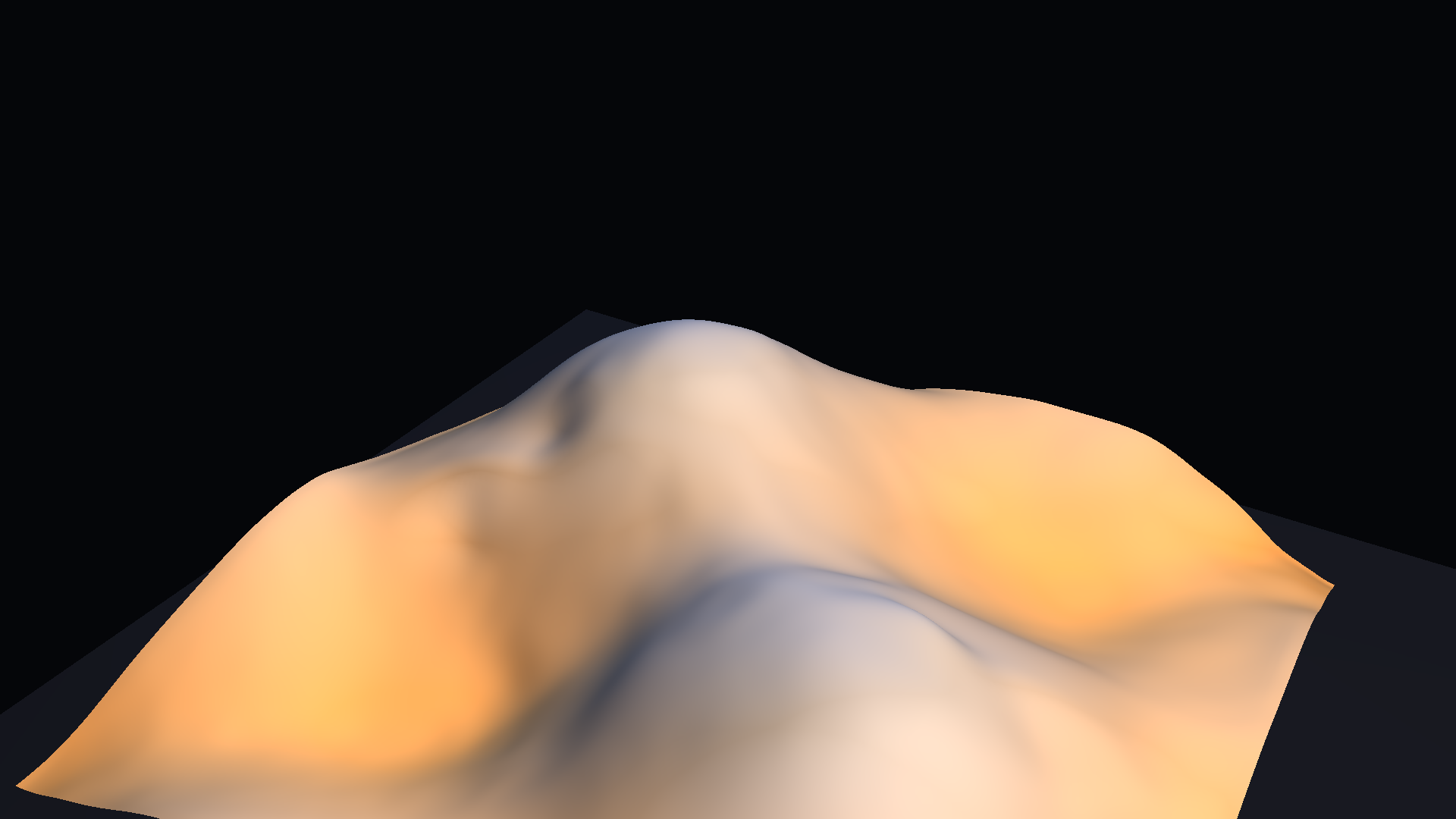

Taichi presets

- Research: shows the wireframe over the learned surface so the mesh structure is legible.

- Pitch: the hero camera, tuned for a calm warm-key / cool-fill lighting rig against a dark background.

- Dramatic: a closer angle that emphasizes fold depth and the height-diverging colormap.

The Bigger Picture

Neural implicit fields matter because cloth simulation is expensive, discrete, and often hard to repurpose once a sequence has been solved. This project shows a more useful workflow:

- generate a trustworthy reference mesh sequence

- learn a compact continuous SDF surrogate

- resample it arbitrarily in space and time

- present it interactively or offline from the same checkpoint

That is why this project is more than a visualization exercise. The neural representation is the actual product of the pipeline. The demo is proof that it works.

What Would Make This More Realistic

The current result is strong as a continuous cloth-SDF surrogate demo, but it is still not the strongest possible version of a realistic learned cloth system.

The main realism limit is that the learned field is still tied to a fairly clean synthetic reference sequence and a presentation pipeline that deliberately smooths and bounds the surface so the result reads clearly. That is the right choice for a demo, but it also means there is still headroom between "convincing technical geometry demo" and "fully rich cloth surrogate."

Realism has to improve across the whole stack

For this project, realism is not just about making the Taichi surface prettier. It depends on several layers at once:

- Reference physics: the target cloth needs rich, physically meaningful behavior.

- Field representation: the model needs to preserve fold depth, crease sharpness, and surface continuity without collapsing into blur or staircasing.

- Temporal fidelity: the learned field needs to stay coherent across time, not just fit isolated frames.

- Sampling and resampling: the continuous-field promise has to hold up when queried away from the exact training grid and training timestamps.

- Presentation: the final surface should reveal the learned structure, not hide weak structure behind styling.

If any one of those layers is weak, the whole result reads as weaker than it really is. A good-looking surface cannot rescue a noisy field. A clean field that is only valid on one fixed sampling grid does not fully cash out the continuous-field claim.

1. Make the reference process physically richer

The learned model can only be as physically rich as the reference sequence it is trained against.

To make this project more realistic overall, the baseline cloth process could be improved with:

- real FEM or XPBD cloth instead of the current synthetic falling-cloth generator

- stronger material diversity (stiff vs soft, stretchy vs inextensible)

- actual self-collision and contact with obstacles

- wind and external force variation

- longer sequences with richer fold evolution

- multi-garment or multi-layer cases

That would make the learned field approximate something closer to a broader class of real cloth behavior instead of one relatively clean family of reference meshes.

2. Preserve sharper spatial and temporal structure

The current model is already coherent, but it still leaves some headroom in fine-scale detail and crease sharpness.

The next realism gains would likely come from:

- sharper crease and fold edges

- less residual softness in the learned surface

- stronger fidelity on rapid temporal changes (snaps, releases)

- better preservation of thin-shell curvature

That could be improved with:

- richer Fourier-feature or multiscale encoding of t

- explicit supervision on surface normals and curvature, not only SDF values

- losses that care about local Hausdorff, not only average Chamfer

- hybrid objectives combining supervised SDF fit with eikonal and physical residual structure

This matters because cloth often looks "almost right" numerically while still feeling slightly too soft or slightly too melted when mapped onto a surface.
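As an illustration of the first item in that list, a multiscale encoding of t could look like the sketch below. The model described above takes raw 4D input, so this is a possible extension rather than what the checkpoint does; `encode_time` and the octave-spaced frequencies are assumptions.

```python
import numpy as np

def encode_time(t, n_freqs=6):
    """Map scalar times to [t, sin(2^k * pi * t), cos(2^k * pi * t)] features."""
    t = np.asarray(t, dtype=float).reshape(-1, 1)
    freqs = (2.0 ** np.arange(n_freqs)) * np.pi     # pi, 2*pi, 4*pi, ...
    angles = t * freqs                               # (N, n_freqs)
    return np.concatenate([t, np.sin(angles), np.cos(angles)], axis=1)

features = encode_time([0.0, 0.5])
print(features.shape)   # one raw t column plus n_freqs sines and n_freqs cosines
```

The higher-frequency columns give the network a direct handle on fast temporal events such as snaps and releases, which a single raw t input has to represent through the network weights alone.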

3. Strengthen the continuous-field claim directly

One of the most important promises of the project is that the learned object is a function f(x, y, z, t), not just a cache of frames.

That claim becomes more realistic and more meaningful when it is tested more aggressively:

- query at unseen intermediate timestamps

- query at finer spatial grids than training

- render at multiple resolutions from the same checkpoint

- evaluate interpolation quality between training frames

- test whether the SDF remains valid (eikonal, single zero level-set) off the training lattice

That would move the project from "a model that fits the training frames well" to "a field representation that really behaves continuously."
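A minimal sketch of the eikonal check from that list, using central finite differences in place of autograd so it runs without a deep learning framework. Both fields are analytic stand-ins (hypothetical, not the trained network): a sphere that is a true SDF, and a sheet of the form z minus height that is only SDF-like.

```python
import numpy as np

def eikonal_residual(field, pts, t, eps=1e-4):
    """Return | ||grad_xyz f|| - 1 | at each point, via central differences."""
    grads = []
    for axis in range(3):
        step = np.zeros(3)
        step[axis] = eps
        hi, lo = pts + step, pts - step
        f_hi = field(hi[:, 0], hi[:, 1], hi[:, 2], t)
        f_lo = field(lo[:, 0], lo[:, 1], lo[:, 2], t)
        grads.append((f_hi - f_lo) / (2.0 * eps))
    grad = np.stack(grads, axis=1)
    return np.abs(np.linalg.norm(grad, axis=1) - 1.0)

def sphere_sdf(x, y, z, t):
    # Exact signed distance to a sphere whose radius grows with t.
    return np.sqrt(x ** 2 + y ** 2 + z ** 2) - (0.5 + 0.1 * t)

def toy_sheet(x, y, z, t):
    # SDF-like only: the gradient norm exceeds 1 wherever the sheet slopes.
    height = 0.1 * np.sin(2.0 * np.pi * (x + t)) * np.cos(2.0 * np.pi * y)
    return z - height

# Random points and a non-frame time, i.e. off any training lattice.
rng = np.random.default_rng(0)
pts = rng.uniform(0.2, 1.0, size=(256, 3))
sphere_res = eikonal_residual(sphere_sdf, pts, t=0.37)
sheet_res = eikonal_residual(toy_sheet, pts, t=0.37)
print(sphere_res.max(), sheet_res.max())
```

Run against the trained network, the same diagnostic would show whether the field still behaves like a distance function away from the samples it was fit on.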

4. Improve longer-horizon temporal coherence

The current Taichi demo is convincing because the learned field is stable enough to survive a real-time surface viewer. That already matters.

But if I wanted the result to feel more realistic in a deeper sense, I would push further on:

- fold persistence over longer time windows

- surface continuity across the training/extrapolation boundary

- reduction of temporal jitter in fine detail

- stronger agreement of drape timing against the solver

Cloth is unforgiving. A surface can look good in stills and still feel subtly wrong once it is played as a mesh over time.

5. Make the final surface closer to physical intuition

The viewer is already doing the right kind of restrained presentation work, but realism could still improve further with:

- better amplitude calibration between SDF gradient and surface normals

- physically motivated shading (cloth-specific BRDF, subsurface hints)

- more faithful height transfer instead of the diverging colormap trick

- optional stress or strain visualization driven by field derivatives

- clearer relation between the learned SDF and the displayed geometry

Again, this should stay downstream of the learned field itself. Better rendering should help reveal the physics, not substitute for it.

If I compress the realism roadmap to one line, it is this:

The path to a more realistic NIF-Cloth4D is richer reference physics, sharper SDF fidelity, stronger off-grid continuity, and only then more polished surface presentation.

What Would Improve The Project Overall

If I step back from realism alone and ask what would make this a stronger project overall, the answer is broader than "make the cloth look nicer."

The project's center of gravity is the representation shift. It matters because it turns a discrete mesh sequence into a callable SDF, so the best improvements are the ones that make that shift more rigorous, more useful, and more obviously valuable.

1. Prove the continuous-field claim more aggressively

The single most important project improvement would be to show that the model is useful specifically because it is a field, not just because it fits a known frame set well.

That means adding demonstrations like:

- low-resolution vs high-resolution mesh extraction from the same checkpoint

- intermediate-time sampling between known frames

- arbitrary camera or mesh resolutions from the same field

- gradient queries for normals and curvature straight from the network

That would make the representation shift much more tangible.

2. Expand the evaluation beyond scalar fit metrics

The current metrics are good and important, but the project would be stronger with a broader evaluation lens.

Useful additions would include:

- per-region Chamfer (hem, crease, interior)

- normal-consistency error

- eikonal-residual diagnostics

- temporal-coherence metrics

- off-grid interpolation tests

- resolution-transfer tests

That would help show not only that the surface is close numerically, but that it behaves correctly in the ways that matter for cloth.
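One of those additions, a temporal-coherence metric, could be as simple as the RMS of the second finite difference in time over the resampled heightfields. This is a hypothetical metric, not one the post reports, and the synthetic sequences below are made up to show how it responds to jitter.

```python
import numpy as np

def temporal_jitter(heights):
    """RMS second difference in time for a (T, N, N) stack of heightfields.

    Assumes uniformly spaced timesteps. Smooth motion still produces a
    nonzero value (real acceleration), so compare against the reference
    sequence rather than against zero.
    """
    accel = heights[2:] - 2.0 * heights[1:-1] + heights[:-2]
    return float(np.sqrt((accel ** 2).mean()))

T, n = 16, 32
ts = np.linspace(0.0, 1.0, T)
xs = np.linspace(0.0, 1.0, n)
X, Y = np.meshgrid(xs, xs, indexing="ij")

# Smooth synthetic motion, and the same motion with per-frame noise added.
smooth = np.stack([0.1 * np.sin(2.0 * np.pi * (X + t)) * np.cos(2.0 * np.pi * Y)
                   for t in ts])
rng = np.random.default_rng(0)
noisy = smooth + rng.normal(scale=0.01, size=smooth.shape)

print(temporal_jitter(smooth), temporal_jitter(noisy))
```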

3. Add ablations that explain what is doing the real work

The project would become more defensible if it showed which ingredients matter most.

For example:

- plain MLP vs SIREN

- different ω₀ values

- with and without eikonal regularization

- with and without physics-informed stretch/bend losses

- different training frame counts

- with and without spatial smoothing in Taichi

That would sharpen the project from "strong demo" into "clear technical result."

4. Build out the query and tooling story

This project becomes much more valuable when the learned SDF is treated as a real reusable artifact.

That means better downstream tools such as:

- a simple field-query CLI

- normal and curvature extraction utilities

- arbitrary-resolution export helpers (OBJ, USD)

- interactive sampling examples

- compact deployment benchmarks for the 3.80 MB checkpoint

Those would make the field feel like a product-grade object rather than only a training outcome.
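As a sketch of the smallest export helper in that list, the function below writes vertices and triangles in Wavefront OBJ text form. `write_obj` is a hypothetical name, and the four-vertex mesh is a placeholder for whatever resolution is extracted from the field.

```python
import io
import numpy as np

def write_obj(stream, verts, faces):
    """Write (V, 3) float vertices and (F, 3) zero-based faces as OBJ text."""
    for x, y, z in verts:
        stream.write(f"v {x:.6f} {y:.6f} {z:.6f}\n")
    for a, b, c in faces:
        # OBJ face indices are 1-based.
        stream.write(f"f {a + 1} {b + 1} {c + 1}\n")

# Placeholder mesh: one quad split into two triangles.
verts = np.array([[0.0, 0.0, 0.0],
                  [1.0, 0.0, 0.0],
                  [0.0, 1.0, 0.0],
                  [1.0, 1.0, 0.1]])
faces = np.array([[0, 1, 2],
                  [1, 3, 2]])

buf = io.StringIO()
write_obj(buf, verts, faces)
obj_text = buf.getvalue()
print(obj_text)
```

Writing to any text stream keeps the helper usable with files and in-memory buffers alike, for example `with open("cloth.obj", "w") as fh: write_obj(fh, verts, faces)`.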

5. Connect the representation shift to concrete use cases

The "who cares?" question lands harder when the project is attached to real workflows.

For example:

- VFX simulation compression (store the network, not the cache)

- fast previs from compact representations

- learned garment libraries

- interactive educational tools for cloth physics

- future inverse or parameter-estimation tasks built on the same field

That is where the project moves from "nice NIF demo" to "useful learned simulation object."

6. Turn the post itself into a stronger technical artifact

The writeup could carry more of the technical argument directly.

To make the post stronger, I would add:

- one explicit representation-shift diagram

- one intermediate-time resampling demonstration

- one off-grid mesh-resolution example

- one compact methodology note on the supervised SDF path

- one short failure or limitation strip

That would make the page more self-contained and much more persuasive to a skeptical reader.

If I Were Continuing This Project

If I had another serious pass on NIF-Cloth4D, I would prioritize the work in this order.

Priority 1: make the continuous-field advantage undeniable

The biggest next step would be to show arbitrary-time and arbitrary-resolution cloth extraction more aggressively, because that is the project's strongest idea.

Priority 2: make the target dynamics richer

After that, I would broaden the reference cloth process so the learned field has more physically interesting structure to approximate, such as real FEM/XPBD cloth, contacts, and material variation.

Priority 3: sharpen crease and high-frequency fidelity

This is where the project would get more visually and geometrically convincing at the same time, through normal-aware supervision and richer temporal encoding.

Priority 4: strengthen downstream tooling

The more reusable the learned SDF becomes, the more the project reads as a practical representation system rather than only a demo.

Honest Current Limitations

The main current limitations are:

- the reference process is a synthetic falling-cloth generator, not a high-fidelity physics solver

- the learned field still leaves some headroom in crease sharpness and fine fold detail

- the final Taichi demo uses restrained spatial smoothing and a fixed-topology heightfield to keep the surface readable (not a full marching-cubes extraction per frame)

- the strongest evidence is still on the specific learned sequence showcased here, not yet on a wide family of cloth problems

None of those invalidate the project. They just define its current scope honestly.

If I reduce the entire post to one sentence, it is this:

The value is not that the project simulates cloth nicely. The value is that it turns the cloth simulation itself into a continuous, reusable geometric object.